Note: Since the publishing of this article, the Philips NutriU app has grown in scope and changed its name to HomeID. You can learn more about the project by visiting our work page.

Recommendation systems play a big part in our lives without us even realizing it. Famous web services like YouTube, Amazon, Netflix, and Spotify use them to make content suggestions tailored to a specific user, improving user experience by a significant margin.

These systems put together tailored playlists, suggest which movie to watch next, or reveal what products are often bought together.

Various services observe user actions like clicks and likes, and use the gathered data as input for the recommendation system to generate personalized recommendations. Using recommendation systems is a better and faster way of discovering relevant content for different people.

The award-winning mobile application NutriU is a companion app for Airfryers, blenders, and juicers. It allows users to control their appliances remotely but also helps them create and share recipes and find culinary inspiration.

When we set out to build a recommendation system for Philips’ NutriU, we realized that while there was plenty of literature online providing an overview of the algorithms and explaining how to train and evaluate such systems, resources on building recommendation systems for production were scarce.

In this blog post, we take you behind the scenes of our project and show you how we’ve built a high-performance, fast and scalable recommendation system in a real-time setting.

A recommendation system for recipes

Besides being a companion app for controlling Philips’ smart kitchen appliances, NutriU gives users a platform to add, browse, and share recipes.

With the previous solution, the user would open the home screen or the search screen, and the app would show them a certain number of recipes from the database.

How were those recipes chosen? They were sorted by simple, manually defined criteria and then displayed top to bottom.

There were a couple of shortfalls to this approach:

- All users saw exactly the same recipes. Nothing was personalized, so the selection was not particularly interesting to any individual user

- The app made no use of the data about how users interacted with it or which recipes they looked at

These shortfalls were degrading the user experience, hence the need for a recommendation system. It would be implemented as a REST API in Python using the popular Flask library.

Philips had a couple of demands for the recommendation system:

- Users should only get recommendations for recipes they had not seen before

- Predictions of recommendations would have to be dynamic and include users’ recent actions, which means these recommendations could not be precalculated

- Updates of the recommender model would have to be frequent and should not interrupt the REST API in any way

Its majesty, data

To build a recommendation system, we needed its majesty, data. We were lucky that NutriU already collected data on the user interactions relevant to a recommendation system, such as recipe views, likes, and comments.

We focused on recipe views because far more data had been collected for this interaction than for any other. Note that NutriU doesn’t have a “real” rating system, so we only had implicit user feedback to work with.

NutriU used the Firebase platform for event tracking and analytics, so user interaction data was stored in BigQuery, a service that enables fast, interactive analysis of massive datasets. It also integrates easily with pandas, Python’s most popular library for data analysis and manipulation.
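As a rough illustration of that integration, here is a minimal sketch of pulling recipe views into a pandas DataFrame. It assumes the standard Firebase export layout in BigQuery (daily `events_*` tables with `event_name`, `user_pseudo_id`, and `event_params` columns). The table name, the `recipe_view` event, and the `recipe_id` parameter are hypothetical placeholders, not NutriU’s actual schema.

```python
# Hypothetical names: adjust to your own Firebase export and event schema.
EVENTS_TABLE = "my-project.analytics_000000.events_*"
VIEW_EVENT = "recipe_view"
RECIPE_PARAM = "recipe_id"


def build_views_query(since_yyyymmdd):
    """Build a query returning one row per recipe view since the given day."""
    return f"""
        SELECT
          user_pseudo_id AS user_id,
          (SELECT value.string_value
             FROM UNNEST(event_params)
            WHERE key = '{RECIPE_PARAM}') AS recipe_id,
          event_timestamp
        FROM `{EVENTS_TABLE}`
        WHERE event_name = '{VIEW_EVENT}'
          AND _TABLE_SUFFIX >= '{since_yyyymmdd}'
    """


def fetch_recipe_views(client, since_yyyymmdd):
    """Run the query and return the result as a pandas DataFrame.

    `client` is expected to be a google.cloud.bigquery.Client.
    """
    # Validate the date so that only digits are interpolated into the SQL.
    if not (len(since_yyyymmdd) == 8 and since_yyyymmdd.isdigit()):
        raise ValueError("expected a date formatted as YYYYMMDD")
    return client.query(build_views_query(since_yyyymmdd)).to_dataframe()
```

With the `google-cloud-bigquery` and `pandas` packages installed and credentials configured, a call would look like `fetch_recipe_views(bigquery.Client(), "20240101")`.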

Acceptable execution time?

However, this is when we stumbled upon our first obstacle. Because we had to calculate recommendations dynamically, we had to fetch fresh user interaction data every time. Although BigQuery runs blazing-fast SQL queries on gigabytes to petabytes of data, execution time for smaller queries is not that fast.

Let’s say you have 1 million records in your database. In that case, query execution time is around 2-3 seconds, which is amazing. However, if you have a lot less data, e.g. a hundred records, query execution still takes around 2-3 seconds. Not that fast anymore.

This pace was not acceptable for our recommender. In general, using BigQuery as the main database of a web application is not recommended. So we had to build an additional database that would regularly pull a relevant subset of data from BigQuery and communicate with the REST API.

For teams dealing with this at scale, a lakehouse approach with Databricks offers a more integrated path by co-locating data, pipelines, and retrieval on a single, governed platform.

Lightning-fast recommendation calculus

There are several common approaches used for building a recommender system, the most popular being:

- Collaborative filtering: This approach uses collaborative information, i.e. implicit or explicit user interaction with items (such as recipes viewed or liked). It doesn’t use information about the actual items or users.

- Content-based approach: This approach works purely on the characteristics of users or items. It completely ignores interactions between users and items.

- Hybrid approach: A combination of the previous two approaches.

There are also other, more advanced approaches, such as matrix factorization and sequential models created with deep learning. Taking each approach into consideration, the team finally settled on collaborative filtering for multiple reasons.

For starters, collaborative filtering is very interpretable, making it simple to explain why a recipe was recommended. It is based on the principle: “If users A and B liked recipe X, and user B likes recipe Y, there is a good chance that user A will also like recipe Y”. Furthermore, other approaches require a lot more work fine-tuning the models to predict meaningful recommendations, while collaborative filtering works on the plug & play principle.
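To make that principle concrete, here is a minimal item-based sketch in plain Python. It is not the code that runs in NutriU: it assumes binary “viewed” feedback, uses cosine similarity between recipes, and all function and variable names are illustrative. It also skips recipes the user has already seen, in line with the requirements listed earlier.

```python
from collections import defaultdict
from math import sqrt


def item_similarities(views):
    """Cosine similarity between recipes, computed from binary view data.

    `views` maps a user id to the set of recipe ids that user has viewed.
    """
    viewers = defaultdict(set)  # recipe id -> users who viewed it
    for user, recipes in views.items():
        for recipe in recipes:
            viewers[recipe].add(user)

    sims = defaultdict(dict)
    recipes = list(viewers)
    for i, a in enumerate(recipes):
        for b in recipes[i + 1:]:
            shared = len(viewers[a] & viewers[b])
            if shared:
                score = shared / sqrt(len(viewers[a]) * len(viewers[b]))
                sims[a][b] = score
                sims[b][a] = score
    return sims


def recommend(user, views, sims, n=10):
    """Rank unseen recipes by their summed similarity to the user's views."""
    seen = views.get(user, set())
    scores = defaultdict(float)
    for recipe in seen:
        for other, score in sims.get(recipe, {}).items():
            if other not in seen:  # only recommend recipes not seen before
                scores[other] += score
    return sorted(scores, key=lambda r: (-scores[r], r))[:n]


views = {"A": {"X"}, "B": {"X", "Y"}, "C": {"Z"}}
sims = item_similarities(views)
print(recommend("A", views, sims))  # ['Y']: B also viewed X, and B viewed Y
```

The explanation for a recommendation falls out of the scores directly: recipe Y is suggested to user A because it is often viewed by the same people who viewed X.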

We implemented collaborative filtering with the implicit library. This amazing library has a simple interface and comes with support for implicit feedback datasets. All its operations and routines are vectorized and multi-threaded, so calculating recommendations is lightning fast.

However, the collaborative filtering method will not yield quality recommendations for cold-start users, i.e. the users who have had no previous interaction with recipes. To them, the app will recommend the recipes with the most views.
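A minimal sketch of such a fallback, assuming the view data is available as a mapping from user id to viewed recipe ids. The `personalized` callable is a hypothetical stand-in for whatever collaborative filtering model is in use.

```python
from collections import Counter


def most_viewed(views, n=10):
    """Return the n recipe ids viewed by the most users."""
    counts = Counter(recipe for recipes in views.values() for recipe in recipes)
    # Sort by view count (descending), then by id so that ties are stable.
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [recipe for recipe, _ in ranked[:n]]


def recommend_with_fallback(user, views, personalized, n=10):
    """Use the personalized model unless the user has no interactions yet."""
    if not views.get(user):  # cold-start user: nothing to base similarity on
        return most_viewed(views, n)
    return personalized(user, n)
```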

Putting theory into practice

The recommender system is implemented as a microservice architecture. It consists of two components:

- Scheduler

- REST API

The collaborative filtering algorithm requires a similarity matrix, which is then used to produce recommendations. Calculating the similarity matrix is time-consuming, which is why we created a scheduled task that executes the whole process:

- pull new data from BigQuery

- store BigQuery data into PostgreSQL

- use stored data to create the similarity matrix

The REST API component responds to a user’s request for recommendations and returns the recommended recipes. As already mentioned, the model needs to be updated frequently without interruptions. Communication between the two components is achieved with Amazon S3 buckets.

The scheduler component generates the similarity matrix and saves it, while the REST API component loads the generated matrix into memory so that recommendations can be served faster.

To avoid interruptions, the REST API runs a background job that loads the new similarity matrix and swaps it with the current one. We ensured that only one such job can run at a time.
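One way to get this behavior is sketched below using only the standard library. It is a minimal illustration under assumptions, not the production code: `ModelHolder` and `load_matrix` are hypothetical names, and `load_matrix` stands in for whatever fetches the latest matrix (for example, a download from an S3 bucket).

```python
import threading


class ModelHolder:
    """Keeps the current similarity matrix and swaps in new ones safely."""

    def __init__(self, load_matrix):
        self._load_matrix = load_matrix       # hypothetical loader callable
        self._matrix = load_matrix()          # initial load at startup
        self._reload_lock = threading.Lock()  # held while a reload job runs

    def current(self):
        """Return the matrix that requests should use right now."""
        return self._matrix

    def refresh_in_background(self):
        """Start a reload job unless one is running; return the thread or None."""
        if not self._reload_lock.acquire(blocking=False):
            return None  # another reload job is still in progress
        thread = threading.Thread(target=self._reload, daemon=True)
        thread.start()
        return thread

    def _reload(self):
        try:
            new_matrix = self._load_matrix()  # slow part; old matrix keeps serving
            self._matrix = new_matrix         # swap is a single reference assignment
        finally:
            self._reload_lock.release()
```

Request handlers only ever call `current()`, so they keep using the old matrix until the new one is fully loaded, and a second refresh triggered in the meantime is simply skipped.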

We benchmarked the execution time of a recommendation request for a cold-start user and for a non-cold-start user. For the latter, we picked the user with the most interactions with the app. The benchmarks were run on a 2.4 GHz Intel Core i7 processor:

- Execution time for a cold-start user is 0.1 s

- Execution time for a non-cold-start user is 0.05 s

Does the new NutriU work better?

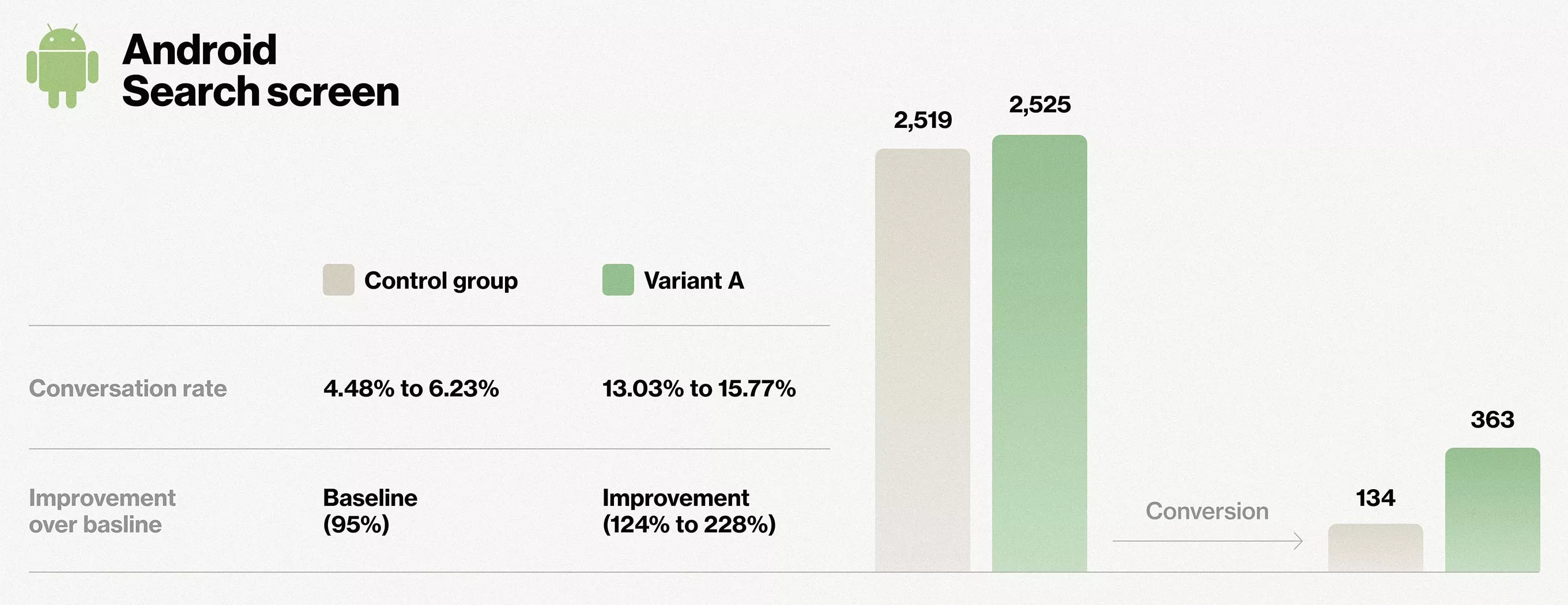

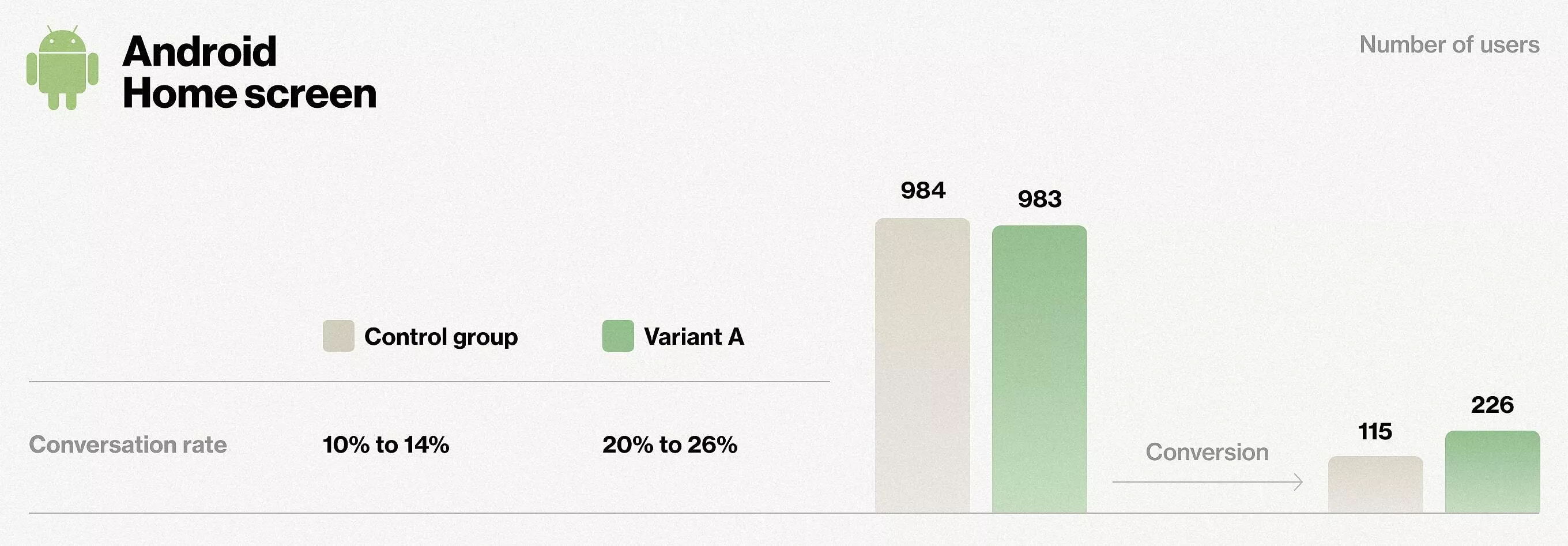

To see if our recommender beats the previous solution, we conducted A/B testing experiments. Here are the results for Android.

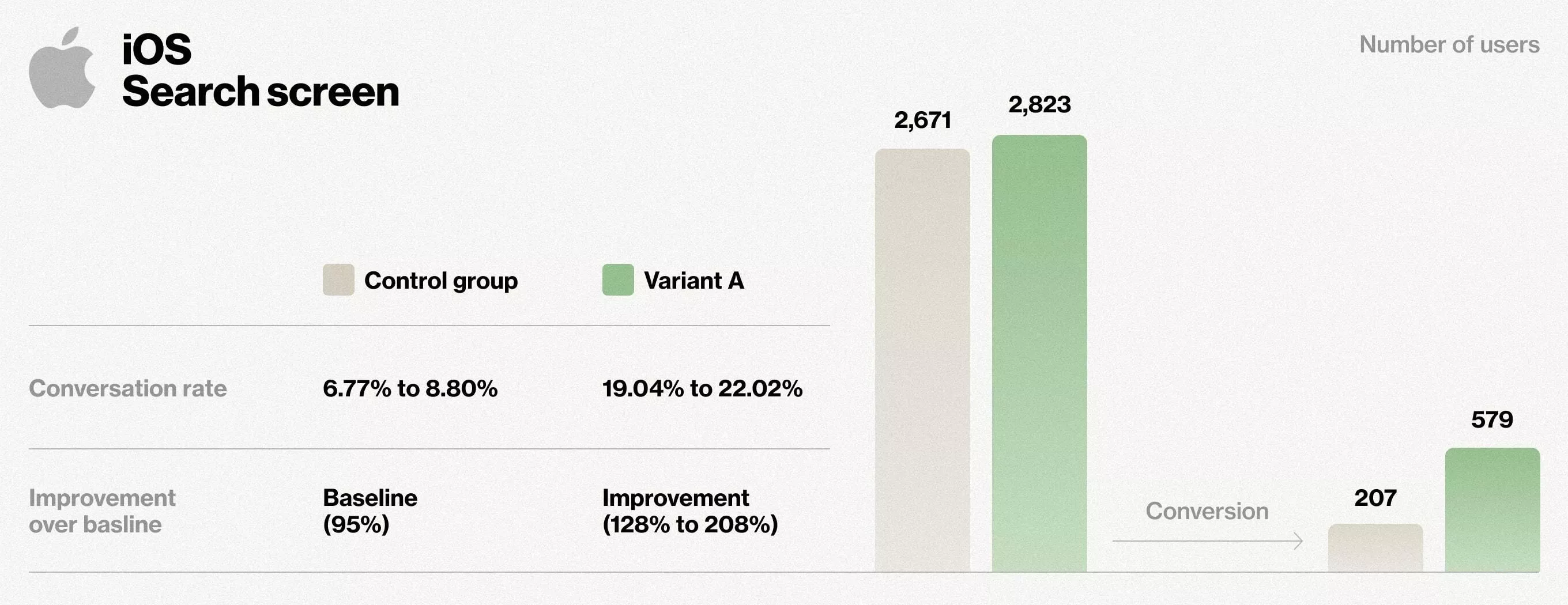

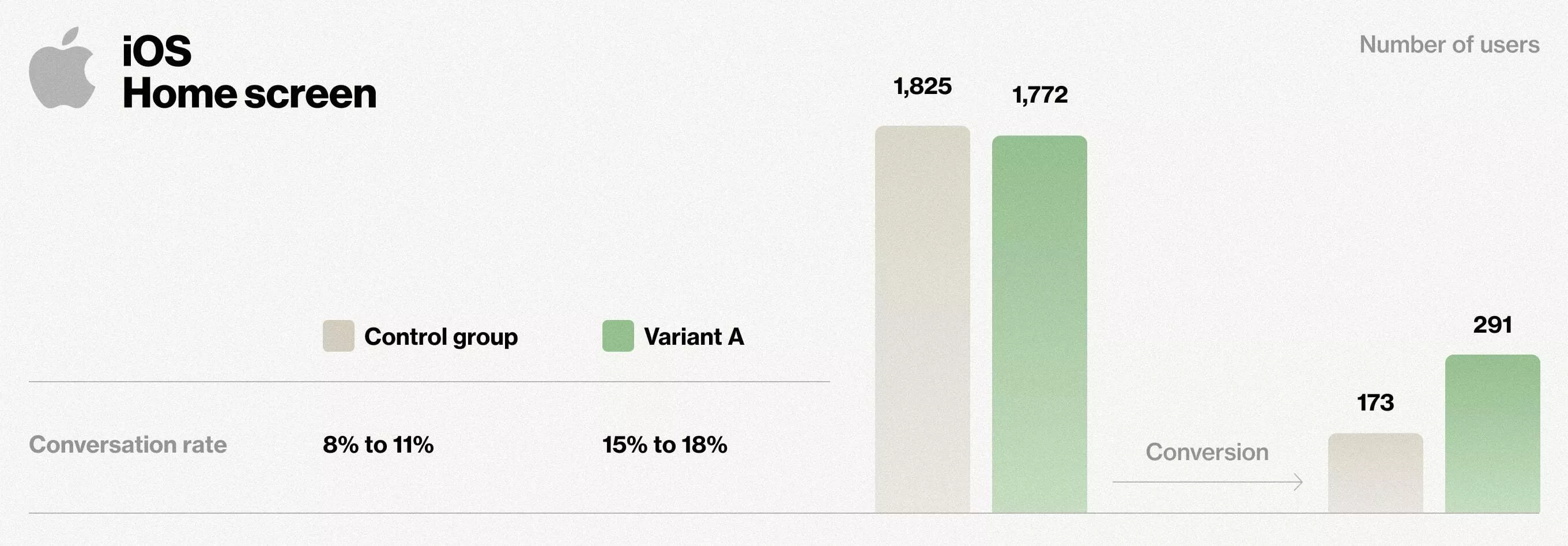

The following are the results for the iOS platform.

Experiment results are crystal clear. Our proposed variant performed 168% better for the search screen, and 70% better for the home screen.

A simple choice for an efficient result

The solution described in this post uses one of the simplest algorithms for recommendations. Yet, it achieved impressive results in A/B experiments with additional gains in the interpretability of recommendations and extremely fast execution.

The intended takeaway from this blog post is that with careful and structured implementation, it is possible to significantly improve user engagement with simple algorithms and to lay the foundation for future, more complex improvements.

If you’d like to learn how else we can utilize machine learning and artificial intelligence to help you achieve your business goals, check out our AI business solutions page.