Budget overruns can and do happen on digital product development projects. We have a few tips to share if you’re wondering how to plan a web development project that won’t leave you broke due to unexpected fees and hidden costs.

Have you ever been shocked by a phone or energy bill that was higher than expected? Hidden costs and unexpected fees are frustrating, especially if you’re a business owner.

When you engage in a web development project, you are more likely than not to encounter problems or delays that are sometimes hard to pinpoint. It’s also not uncommon for these projects to go over budget. Sometimes the reason is out of your hands, but other times, this can easily be prevented by acting at the right time and making smart decisions.

In more than 15 years of working with WordPress, I have come across a wide range of projects – some good, some bad, and some downright ugly. In this article, I’ll pinpoint the usual suspects that often raise the overall costs of a web development project and share my advice on avoiding them. Let’s delve into how to plan a web development project so that you avoid headaches, unwelcome surprises, and unexpected costs.

How to plan a web development project in 5 steps

What’s the project?

Let’s illustrate this with an imaginary project. Say your company produces high-quality speakers you have spent years developing and perfecting. You want a website to showcase your product and attract potential customers and investors.

Considering your business goals, a simple website that provides basic information about the product, like countless others, may not be enough. You want something custom that distinguishes your product from the competition and catches people’s attention. After some research, you are set on the idea that your website should offer e-commerce functionality and provide an in-depth comparison of your speakers with your competitors’ products.

This type of project requires more advanced web development and potentially includes some hurdles along the way.

How to ensure a successful start for your web development project

Putting your ideas down on paper and turning them into a real website can seem like a daunting task. Here’s a short list of things to remember when building a website for your brand, product, or service. This list isn’t exhaustive, but it covers some of the most important things to consider for any web development project.

Research your web development agency

Sometimes, you may come across agencies that offer cheaper services or know individuals who claim to “make websites”. While it may seem like an attractive option, I assure you that this type of engagement will cost you more in the long run.

This approach may be suitable if you are starting on a limited budget. However, implementing any changes becomes time-consuming as your company grows and evolves. Solo developers or small agencies may not have the necessary resources to support your growing company. They also might work with a “let’s make this fast and fix it later” mindset, which can lead to website crashes, code problems, legacy issues, and more.

To make sure you choose your future development partner wisely, we’ve prepared a list of questions to help you make the selection.

Research the web development technology

One of the key factors that can determine the success or failure of your project is the technology stack used to build your website. Sometimes, companies opt for trendy or cutting-edge technologies without fully understanding their implications. While it may seem exciting to use the latest tools, it can lead to compatibility issues, limited developer expertise, and increased development time and costs.

If you’re unsure about the technology decisions, don’t hesitate to consult with experienced professionals or seek advice from reliable sources.

It’s essential to thoroughly research the technology options available and choose ones that align with your business requirements and long-term goals. Ensure that you and your development team clearly understand the technology choices and their potential limitations. Consider factors such as scalability, security, ease of maintenance, and community support. This is also an excellent time to research the YAGNI (You Aren’t Gonna Need It) principle. Trust me, you will thank me later.

Provide a detailed web development project brief

A common pitfall in your role as a client is not providing a comprehensive and detailed project brief to the development/design team. Without a clear roadmap and understanding of your expectations, the chances of miscommunication and divergent results increase significantly.

A good project brief should include:

- a comprehensive description of your website’s features

- design guidelines

- target audience

- desired functionalities

- project timelines

A well-defined project scope will help the development team deliver the expected outcome and make it easier to track progress and identify any deviations early in the development process.

However, if you’re worried you won’t be able to come up with all the details yourself, any good agency will know how to help you figure these things out early on. At Infinum, we prefer to kick off projects with product discovery workshops, in which we build the plan and project outline together.

Good agencies will always be there to support you. After all, it’s part of the service you’re paying for. Of course, it’s important to remember that you must bring expertise to the table about your brand or product so that the product strategy we map out matches your business goals and ultimately brings you value.

Listen to your web development agency

While you may have a clear vision for your website, it’s crucial to listen to the advice and recommendations of the agency you’ve hired. As experts in their field, they can provide valuable insights and suggestions that can enhance your project’s success.

Engage in open and transparent communication with your development team. Be receptive to their feedback and ideas, and work collaboratively to find the best solutions for your project. Sometimes, clients can be rigid in their demands, but balancing your vision and the agency’s expertise is essential.

For example, suppose a feature is estimated to take approximately 30 days. If your agency proposes a minor alteration to the design and functionality that could reduce that to 10 days, the option is worth considering. You chose the agency based on its references and expertise, so you can trust that it wouldn’t suggest a change unless it believed this was the best course of action. Remember, the development team wants your project to succeed just as much as you do, so fostering a cooperative working relationship will lead to better results.

Be cautious about spending too much of your web development budget at once

When embarking on a significant project like building a new website, it’s wise not to invest all your resources at once. Instead, together with the project team, devise a plan for an iterative approach and break your project into manageable phases. This allows you to assess the progress, receive feedback, and make adjustments before moving on to the next phase.

By dividing the project into smaller milestones, you have better control over the budget and can make informed decisions based on the outcomes of each phase.

This approach also enables you to validate the project’s direction and make necessary course corrections early in the process. It also minimizes the risk of pouring substantial resources into a project that might not yield the desired results.
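To make the phased approach more concrete, here is a minimal Python sketch of how you might review spending after each phase. Everything in it is an assumption made for illustration: the phase names, the amounts, the total budget, and the 10% overrun tolerance are hypothetical and not figures from a real project.

```python
from dataclasses import dataclass


@dataclass
class Phase:
    name: str
    planned: float  # budget agreed for the phase (hypothetical figure)
    actual: float   # amount actually spent on the phase


def review_phases(phases, total_budget, tolerance=0.10):
    """Subtract each phase's spend from the total and flag overruns.

    A phase is flagged when its actual cost exceeds the planned cost
    by more than `tolerance` (10% by default, an assumed threshold).
    Returns (remaining_budget, names_of_flagged_phases).
    """
    remaining = total_budget
    flagged = []
    for phase in phases:
        remaining -= phase.actual
        if phase.actual > phase.planned * (1 + tolerance):
            flagged.append(phase.name)
    return remaining, flagged


# Hypothetical phases for the speaker website example.
phases = [
    Phase("Discovery workshop", planned=5_000, actual=5_200),
    Phase("Product showcase", planned=15_000, actual=18_000),
]
remaining, flagged = review_phases(phases, total_budget=60_000)
print(f"Remaining budget: {remaining}")
print(f"Phases to discuss before continuing: {flagged}")
```

A check like this after every milestone is one way to notice an overrun while there is still budget left to adjust the scope of the next phase.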

Bumps in the road you may not have thought about (yet)

Typically, it’s not the primary features that deplete your project funds – since you probably planned them beforehand – but the concealed details you or your agency overlooked during the initial estimate. Selecting your agency carefully will likely eliminate the need to be concerned about this, but conducting your research beforehand is always a good idea.

Inadequate security measures

Neglecting to implement robust security protocols can expose your website and sensitive user data to potential threats. Data breaches and cyber-attacks can have severe financial and reputational consequences for your company.

Poor performance optimization

Ignoring performance optimization can result in slow-loading webpages, leading to a higher bounce rate and dissatisfied users. Website speed and responsiveness are critical factors in user experience and search engine rankings.

Lack of mobile responsiveness

In today’s mobile-oriented world, a website that is not optimized for mobile devices can be a significant setback. A large portion of your audience may access your website through smartphones and tablets, so ensuring mobile responsiveness is vital.

Underestimating content creation

Clients often underestimate the time and effort required for content creation. Developing high-quality, engaging, and SEO-friendly content can be time-consuming. Delays in content submission can hold up the entire website development process. If you require multi-language support, multiply this by the number of languages you need.
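As a rough illustration of how quickly content work adds up, the sketch below applies the multiply-by-languages rule of thumb in Python. The function name, the page count, and the hours per page are hypothetical, and the calculation assumes every language version takes as long as the original, which may overestimate translation work.

```python
def content_effort_hours(pages, hours_per_page, languages=1):
    """Estimate total content hours, scaled by the number of languages.

    Assumes each language version needs the same effort per page.
    """
    if languages < 1:
        raise ValueError("languages must be at least 1")
    return pages * hours_per_page * languages


# Hypothetical site: 20 pages, 3 hours of writing per page.
single_language = content_effort_hours(pages=20, hours_per_page=3)
three_languages = content_effort_hours(pages=20, hours_per_page=3, languages=3)
print(single_language, three_languages)
```

Even with these modest assumed numbers, going from one language to three turns 60 hours of content work into 180.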

Overlooking backup and recovery plans

Website data loss can be disastrous for a business. You should ensure proper backup and recovery plans to safeguard your website and its data.

Overlooking ongoing maintenance

A website requires regular maintenance, including software updates, security patches, and content updates. You should be prepared for the ongoing commitment to keeping your website running smoothly.

Rushing the launch

Launching a website prematurely without thorough testing can lead to embarrassing glitches and negatively impact your brand reputation.

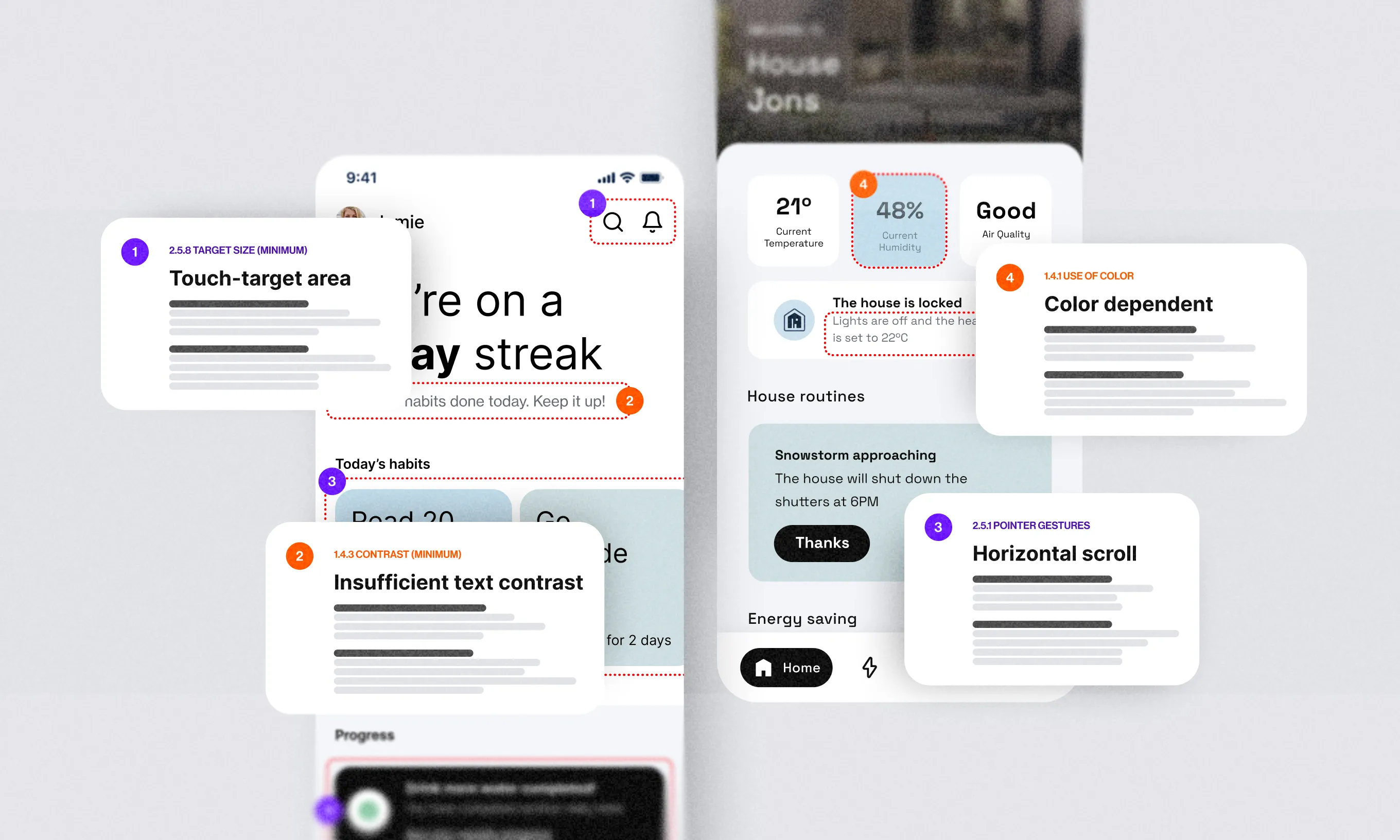

Accessibility

Investing in accessibility means more people can use your website. Keep in mind that accessibility regulations are on the way, and that adding accessibility features retroactively is generally more costly and less effective than building them in from the start.

This is how we do it

At Infinum, we understand the significance of well-executed projects and their impact on your company’s financial health. Here’s how we ensure project success:

Comprehensive analysis

We conduct an in-depth analysis of your business goals and requirements to recommend the most suitable technology stack and development approach before we even start.

Collaborative planning

We work closely with you to create a detailed project brief, breaking down the project into achievable milestones and setting realistic timelines. We do this in a product discovery workshop.

Transparent communication

Our team values open and transparent communication, ensuring you are regularly updated on the project’s progress and involved in decision-making. In essence, you will know everything that is happening at all times.

Iterative development

By following an iterative development process, we deliver incremental results, enabling you to provide feedback and make adjustments throughout the project. Sprints, planning, working in an agile way: we do it all.

Rigorous testing

We prioritize rigorous testing and quality assurance to identify and rectify issues before the website’s launch, ensuring a seamless user experience.

How to plan a web development project, the smart way

Creating a great product takes time. By conducting thorough research and choosing your agency wisely, you can avoid the pitfalls of low-cost, inexperienced options. If you are serious about your business, avoid rushing to the finish line.

We’ve identified some of the most common issues on web development projects and what you can do to anticipate and prevent them. Thorough research, clear project briefs, open communication, and incremental development ensure better alignment with your team, reduce misunderstandings, allow for timely adjustments, and, in the end, save you money.

No one likes hidden fees and unexpected costs. If you want to ensure you don’t break the bank with your next web development project but rather invest wisely – drop us a line, and let’s talk.