A hardware sampler is a device that takes recorded pieces of audio data, called “samples”, and plays them back on a key press. While the history of hardware sampling is interesting, it is fair to say that software samplers now prevail thanks to their flexibility.

A software sampler does exactly the same thing as a hardware sampler, but with a lot more freedom. And while Apple hides its Sampler in GarageBand and Logic Pro, it does expose the Sampler audio unit and its wrapper, AVAudioUnitSampler. This appears to be a “Lite” version of the Sampler found in Apple’s professional tools.

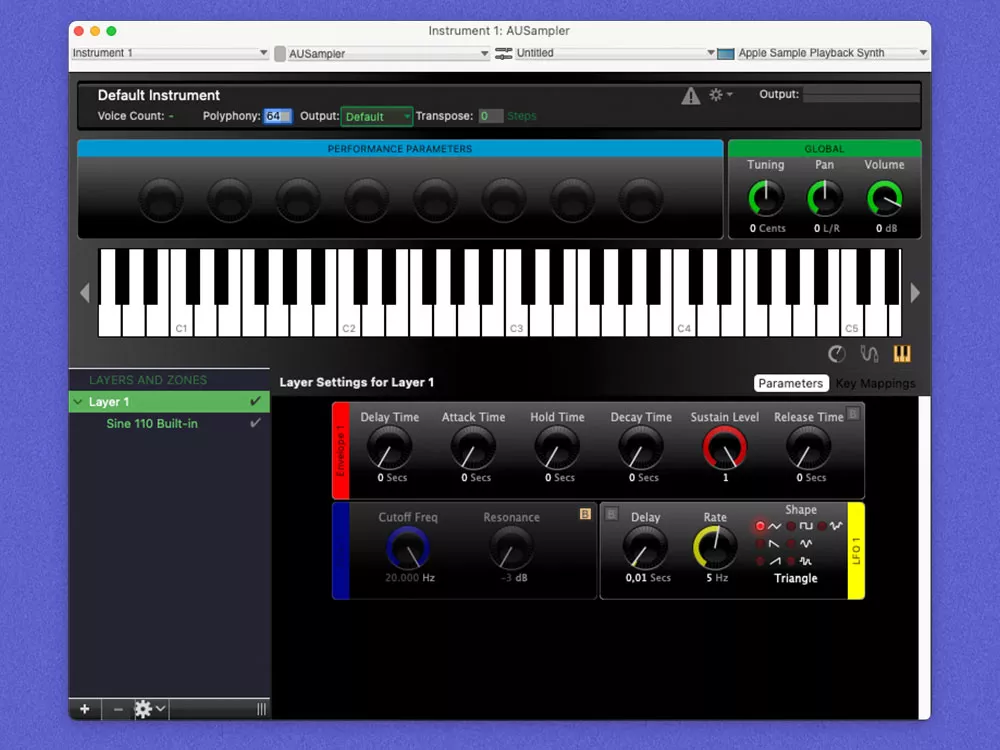

The AUSampler plugin can be found in both Logic and GarageBand. In GarageBand, go to Track → Plugins → AU Instruments → Apple → AUSampler.

This article is only the beginning. We continue our exploration of Apple’s sampler audio unit in the next blog post in the AUSampler series, where we test it to see if the promise of AVAudioEngine’s dynamic connection abilities holds true.

The trouble begins

Don’t assume the sampler is simple. It is a rich and complex component that can take you very far. Some of its features include:

- Streaming samples from disk

- Sharing samples in memory

- Voice-stealing capabilities

- Zone mapping

Apple first introduced this API in iOS 5 and improved it further with the introduction of the AVAudioEngine API in iOS 8. Unfortunately, it is largely undocumented. There are two (very useful) tech notes, one about loading instruments and the other one about controlling the sampler in real-time. Additionally, there are a few old WWDC videos, but some of them are from 2012 and Apple has removed them from their site, so you’d need to get them from other sources. And that about covers it.

Luckily, Apple released the AU Lab app, which includes the AUSampler plugin and showcases many of the sampler’s features. It doesn’t just showcase them; it is also an essential tool for importing, editing and exporting your instruments in AUSampler’s native format.

AU Lab is a great, simple app that includes other audio units, such as AUAudioFilePlayer, along with many effects. It lets you play with them in a simple interface, which makes it a great tool for comparing the output of your setup with the expected output.

This app will prove fundamental in building our understanding of some more intricate (not to say buggy) behaviors. And Sampler is not short on those.

Sampler crashes when not attached to the engine

What do you think will happen in the following case?

var sampler: AVAudioUnitSampler? = AVAudioUnitSampler()

sampler?.loadPreset(at: url)

sampler = nil

The app will crash with:

ASSERTION FAILED: unbalanced reference count

To work around this, you will need to attach the sampler to the engine before deallocating it.

let engine = AVAudioEngine()

var sampler: AVAudioUnitSampler? = AVAudioUnitSampler()

sampler?.loadPreset(at: url)

// Attach and detach once so the sampler can be released safely
if let node = sampler {

    engine.attach(node)

    engine.detach(node)

}

sampler = nil

We filed this bug with Apple under FB10136468, and it was fixed in macOS 13 Beta 4.

Open file limit

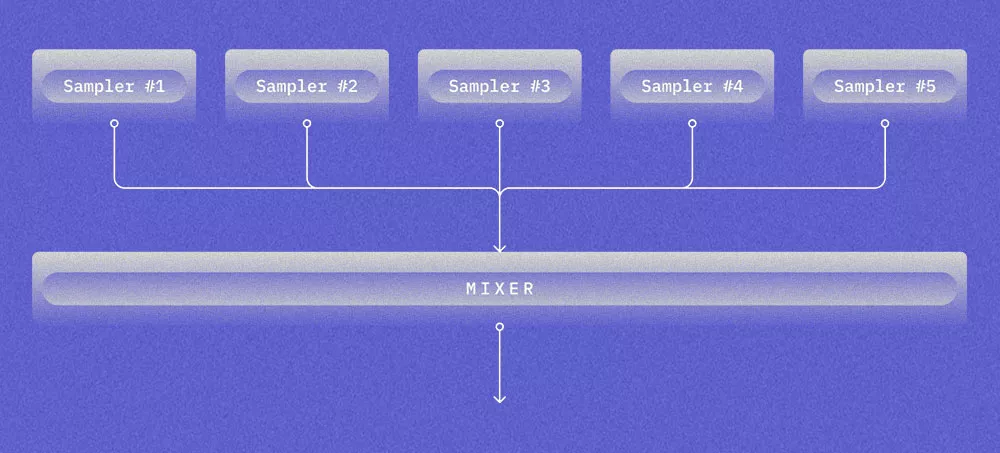

Imagine you have the following setup:

Each Sampler loads a different instrument with 50 samples. What do you think will happen?

At some point, when loading an instrument to one of the samplers, you will get:

SampleManager.cpp:434 Failed to load sample 'sample.caf': error -42

The error does not come from the sampler itself: the process is trying to keep too many files open at the same time. Error -42 is the classic Mac OS “too many files open” code (tmfoErr). The default soft limit on open file descriptors per process is 256. You can verify this by running:

ulimit -n

This can be fixed by increasing the limit:

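var limit = rlimit()

// Read the current soft and hard limits
getrlimit(RLIMIT_NOFILE, &limit)

// 1024 is an example value; the soft limit cannot exceed the hard limit
limit.rlim_cur = min(1024, limit.rlim_max)

setrlimit(RLIMIT_NOFILE, &limit)

// Read the limits back to confirm the change
getrlimit(RLIMIT_NOFILE, &limit)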
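The snippet above discards the return values of getrlimit and setrlimit, so a rejected request goes unnoticed. Below is a slightly more defensive sketch for Apple platforms. The helper name raiseOpenFileLimit and the requested value of 1024 are illustrative assumptions, not part of any Apple API or of the original project.

```swift
import Foundation

/// Hypothetical helper: raises the soft limit on open file descriptors
/// for the current process. Returns the soft limit in effect afterwards,
/// or nil if one of the system calls failed.
func raiseOpenFileLimit(to requested: rlim_t) -> rlim_t? {
    var limit = rlimit()
    guard getrlimit(RLIMIT_NOFILE, &limit) == 0 else { return nil }

    // Nothing to do if the soft limit is already high enough.
    if limit.rlim_cur >= requested { return limit.rlim_cur }

    // The soft limit can never exceed the hard limit.
    limit.rlim_cur = min(requested, limit.rlim_max)
    guard setrlimit(RLIMIT_NOFILE, &limit) == 0 else { return nil }

    // Read the value back to confirm what the kernel accepted.
    guard getrlimit(RLIMIT_NOFILE, &limit) == 0 else { return nil }
    return limit.rlim_cur
}

// 1024 is an example value; pick one that matches your sample count.
if let newLimit = raiseOpenFileLimit(to: 1024) {
    print("Open file limit is now \(newLimit)")
} else {
    print("Could not change the open file limit")
}
```

Calling this once at startup, before any sampler loads an instrument, should be enough, since the limit applies to the whole process.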

Hidden Voice Count limit

Imagine you have a Sampler with 50 samples. Each note triggers all of them. What will happen if you sustain a lot of notes?

Well, it turns out that there is a limit to the number of concurrently playing samples. It is 128 on iOS and 256 on macOS.

But what do you expect will happen when the limit is reached? Probably that voice stealing will kick in and kill the very first note. But no, it actually ignores any new notes sent to the Sampler.
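To get a feel for how quickly the limit is reached, here is a small arithmetic sketch using the numbers from this section. It assumes that a note is dropped as a whole when its samples no longer fit under the limit, which is a simplification; the function name playableNotes is made up for illustration.

```swift
/// Hypothetical helper: number of notes that can sound in full before the
/// concurrent-sample limit is hit, if every note triggers `samplesPerNote`
/// samples.
func playableNotes(sampleLimit: Int, samplesPerNote: Int) -> Int {
    precondition(samplesPerNote > 0, "samplesPerNote must be positive")
    return sampleLimit / samplesPerNote
}

// Limits quoted above: 128 on iOS, 256 on macOS; 50 samples per note.
let iOSNotes = playableNotes(sampleLimit: 128, samplesPerNote: 50)   // 2
let macOSNotes = playableNotes(sampleLimit: 256, samplesPerNote: 50) // 5
print("iOS: \(iOSNotes) notes, macOS: \(macOSNotes) notes")
```

With 50 samples per note, only a handful of sustained notes fit under the limit, so a simple chord held with the sustain pedal is enough to trigger the problem.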

We filed this bug with Apple under FB9835828, and it was fixed in macOS 13 Beta 4.

Please note that there is a distinction between Voice Count and the number of playing samples. Voice Count of 1 can represent many concurrently playing samples.

How do you know if a very quiet sample is playing? AU Lab’s Voice Count indicator is very useful in this situation.

To investigate behaviors like these, we will need to go off-road and perform somewhat unconventional steps:

- Disassemble frameworks of interest

- Attach a debugger to AU Lab

Frameworks layout

To prepare ourselves for this quest, we first need to have a high-level overview of the frameworks involved.

AUSampler plugin

When you load the AUSampler Audio Unit instrument in AU Lab, you are presented with the UI that controls it. An Audio Unit can expose its own UI, and what we see in AU Lab is AUSampler’s UI.

You can load AUSampler’s UI from your apps by using the requestViewController API on AUSampler audio unit.

Presenting an Audio Unit’s custom UI from your app is a great way to explore the unit. Unfortunately, the rich view is only available in macOS and missing in iOS.
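As a rough sketch of what requesting the UI could look like on macOS: the wrapper below calls requestViewController(completionHandler:) on the sampler’s auAudioUnit and hands the result back on the main queue. The function name requestSamplerUI is an assumption made for this example, and how you present the returned view controller is up to your app.

```swift
import AVFoundation
import CoreAudioKit

/// Hypothetical helper: asks the sampler's AUAudioUnit for its custom view
/// controller and delivers the result on the main queue. The result is nil
/// when the audio unit does not provide a custom view.
func requestSamplerUI(
    from sampler: AVAudioUnitSampler,
    completion: @escaping (AUViewControllerBase?) -> Void
) {
    sampler.auAudioUnit.requestViewController { viewController in
        // UI work has to happen on the main queue.
        DispatchQueue.main.async {
            completion(viewController)
        }
    }
}
```

On macOS the returned controller is an NSViewController, so one option is to show it in its own window with NSWindow(contentViewController:).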

AUSampler’s UI, as well as the UIs of many other Apple Audio Units, is implemented in:

/System/Library/Components/CoreAudio.component/Contents/PlugIns/CoreAudioAUUI.bundle/Contents/MacOS/CoreAudioAUUI

Sampler implementation

The implementation of Sampler Audio Unit in iOS can be found here:

~/Library/Developer/Xcode/iOS DeviceSupport/{IOS_VERSION}/Symbols/System/Library/Frameworks/AudioToolbox.framework/libEmbeddedSystemAUs.dylib

In macOS, it can be found here:

/System/Library/Frameworks/CoreAudio.framework/Versions/A/Resources/

The dylib is not on disk at that path; it lives in the dyld shared cache. Additionally, the macOS version doesn’t expose any symbols, which makes debugging and reverse engineering much more difficult.

Reversing voice count functionality

Let’s move to the useful part and find out how Voice Count is implemented in the AUSampler plugin.

Attaching to AU Lab

The very first step that we need to do is to attach the debugger to the AU Lab application. We can do this in the following way:

1. Start the process

2. In Xcode, go to Debug → Attach to Process and find our process

If you try to do this, it will most likely fail because we are not allowed to attach the debugger to just any binary. There are a few options:

- Disable System Integrity Protection

- Resign AU Lab

- Install a virtual machine and disable SIP there

In this case, resigning is probably the easiest way to achieve what we want:

codesign -f -s "Apple Development: …" ~/Desktop/"AU Lab Copy.app"

Attaching the debugger should now work. Now that we are inside, what should we do?

We could do this step by step, but we will take a shortcut and guess that it has to do with AudioUnitGetProperty.

Let’s set a breakpoint on it.

On Intel:

rb "AudioUnitGetProperty$" --command "po $rsi" --auto-continue 1

On ARM:

rb "AudioUnitGetProperty$" --command "po $x1" --auto-continue 1

- rb – create a regex breakpoint

- --command – execute a command when the breakpoint is hit. Here it prints $rsi (Intel) or $x1 (ARM), the register that holds the function’s second argument, which is the property ID we are interested in

- --auto-continue – do not stop at the breakpoint

This gives us a secret voice count property id which is 4104 in this case. We are now able to implement this in our own code:

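// Assumption: the value is returned as a 32-bit unsigned integer
var count: UInt32 = 0

var countSize = UInt32(MemoryLayout<UInt32>.size)

// audioUnit is the sampler's underlying unit, e.g. sampler.audioUnit
let status = AudioUnitGetProperty(

    audioUnit,

    4104, // undocumented voice count property ID found above

    kAudioUnitScope_Global,

    0,

    &count,

    &countSize

)

// count is only valid when status == noErr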

Only the beginning

With this blog post, we’ve just scratched the surface of Apple’s mysterious sampler audio unit, powerful yet underexplored.

We set the stage and learned how to use a simple toolbox to tackle more of Sampler’s intricacies.

You can find the associated source code here.

Stay tuned for more.