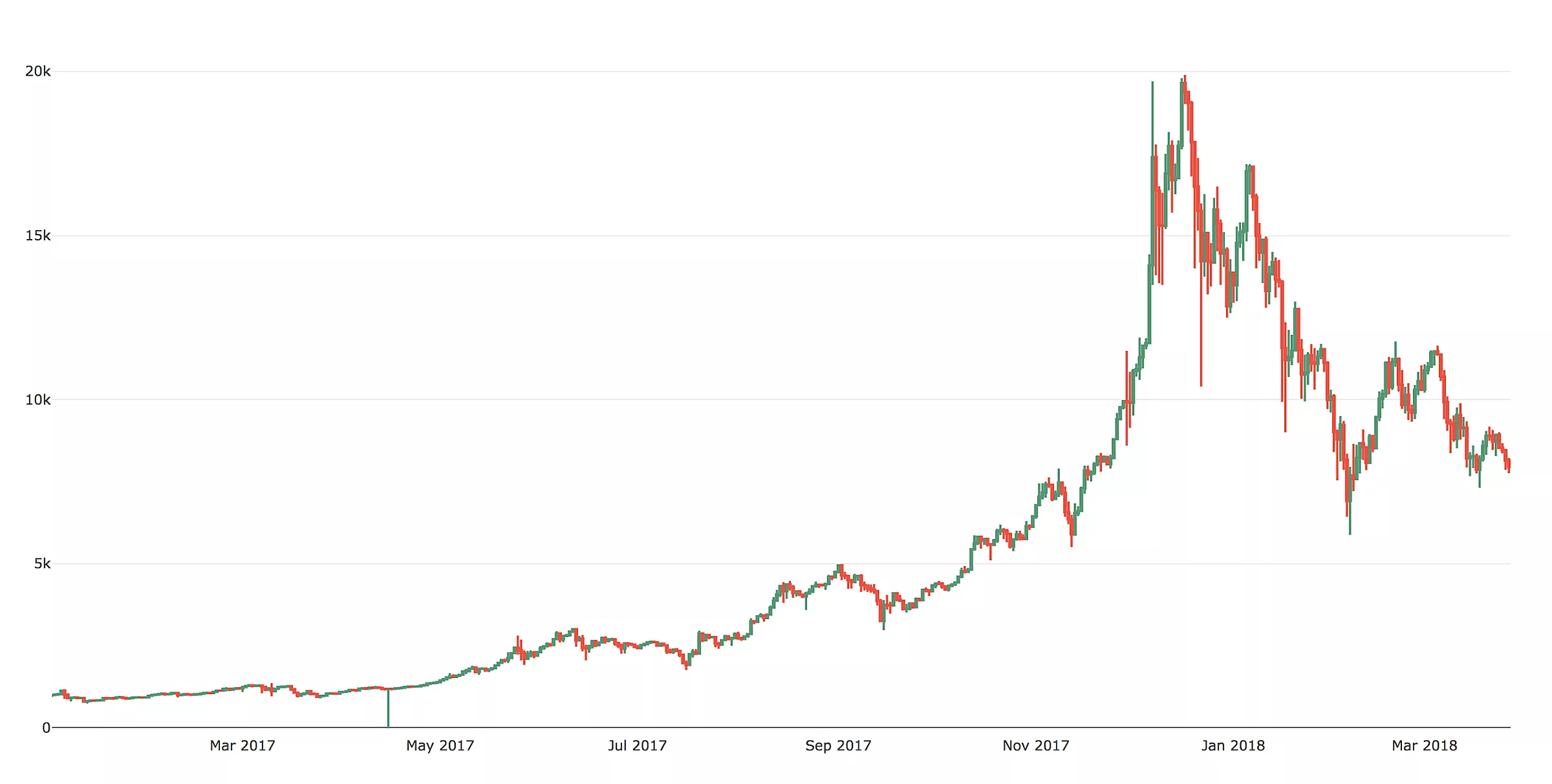

Investing in cryptocurrencies became one of the most popular topics in 2018. A case in point is how the search term “cryptocurrency” on Google skyrocketed at the end of 2017.

Why did cryptocurrency shoot to fame?

It was an opportunity to earn a lot of money in a short period of time and nobody wanted to miss it. Many people bought a cryptocurrency without really knowing what it is, where its value comes from, and how the economy works in general.

The fear of missing out drove the price of Bitcoin to almost $20,000 in December 2017. A crash was inevitable and we didn’t want to risk it with that kind of investment.

In order to develop a smart automated trading system which would mitigate the risk of a big loss, we had to learn the basic concepts of trading and different types of strategies which we could utilize.

A word about investing

The definition of investing is committing money or capital to an endeavor, with the expectation of obtaining an additional income or profit. Depending on the time period of holding a security, there are two different types of investment: long-term and short-term investment.

We were interested in short-term investing.

Short-term investing covers several strategies, the most popular being high-frequency trading (HFT), day trading and swing trading.

Each of these strategies is specific and has its own pros and cons:

- High-frequency trading doesn’t work so well with cryptocurrencies because transfers between exchanges are slow, most exchanges don’t have an appropriate interface for HFT, and opportunities for profit are reduced by relatively large fees.

- Day trading and swing trading are similar and simpler to set up, so we decided to build a day trading system. There were significant price changes within a matter of minutes which we could exploit. The risk in such a volatile market is high, but there are also many opportunities for profit.

Creating a day trading system

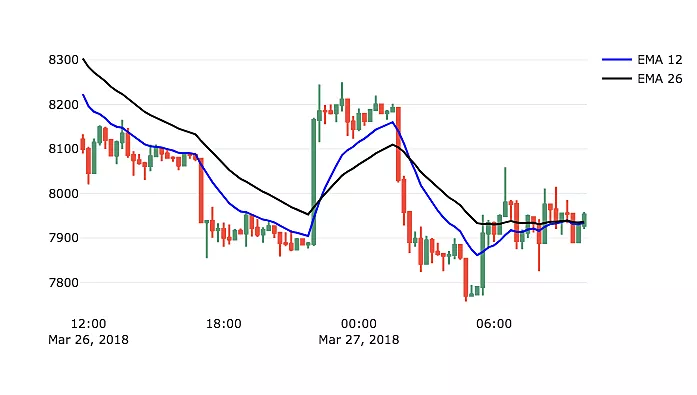

We ran many simulations with different parameters for technical indicators and time intervals to generate buy and sell signals. The simulations showed some promising results, but the main problem was sudden changes, where indicators couldn’t capture fast price movements in a short period of time.

Technical indicators often produce signals with a delay, which is why they work better for longer, stable trends.

Even the best set of parameters often ended up performing worse than the market once we included exchange fees in our calculations.
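To make the lag and the effect of fees concrete, here is a minimal sketch of one common indicator-based setup, a moving-average crossover. The choice of indicator, the window lengths `fast` and `slow`, and the 0.25% `fee` are assumptions for illustration; the simulations described above may have used different indicators and fee levels.

```python
import numpy as np

def sma(prices, window):
    """Simple moving average; the first window-1 entries are NaN."""
    prices = np.asarray(prices, dtype=float)
    out = np.full(len(prices), np.nan)
    csum = np.cumsum(np.insert(prices, 0, 0.0))
    out[window - 1:] = (csum[window:] - csum[:-window]) / window
    return out

def backtest_crossover(prices, fast=5, slow=20, fee=0.0025):
    """Hold the asset while the fast SMA is above the slow SMA.

    Every buy or sell costs `fee` (a fraction of the traded amount).
    Returns (strategy_return, buy_and_hold_return) as fractions.
    """
    prices = np.asarray(prices, dtype=float)
    fast_ma, slow_ma = sma(prices, fast), sma(prices, slow)
    cash, coins = 1.0, 0.0
    # No signal exists until the slow average has enough history.
    for t in range(slow - 1, len(prices)):
        want_long = fast_ma[t] > slow_ma[t]
        if want_long and coins == 0.0:          # buy signal
            coins, cash = cash * (1 - fee) / prices[t], 0.0
        elif not want_long and coins > 0.0:     # sell signal
            cash, coins = coins * prices[t] * (1 - fee), 0.0
    final_value = cash + coins * prices[-1]
    return final_value - 1.0, prices[-1] / prices[0] - 1.0
```

On a steadily rising series, the crossover can only fire once the slow average is defined, so the strategy enters late, pays a fee, and ends up behind simply buying and holding.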

Deep learning for solving complex tasks

This initial step was done in order to get familiar with cryptocurrency trading, financial data and exchanges.

Like cryptocurrencies, deep learning has lately been a very popular approach for solving complex tasks. It is used in natural language processing, image classification, self-driving cars, recommendation systems, etc.

Like a human brain, a deep neural network has layers of artificial neurons.

When a neuron fires, it transmits information to connected neurons in the next layer. During learning, connections in the network are adjusted so that it outputs the expected results. The process of learning is often referred to as training.

The idea was to use a deep neural network to find patterns in price movements (if they exist) and predict the price in the near future.

If a neural network were able to predict the price with good accuracy, we could use that information to make decisions to buy or sell.

How did we do it?!

The definition of the problem was the prediction of the next value in a time series.

We decided to use an LSTM network, which has been successful in tasks where the output of the neural network depends on inputs from previous steps. LSTM is a special kind of recurrent neural network (RNN). It has the ability to remember “long-term dependencies” between previous information and the present task.

In our case, the price depends on previous values, and price movements could have been affected by different events in the past. For example, a big crash could happen in the next 15 minutes because there was a long exponential uptrend which caused an overbought state.

The data set included price and volume from January 8, 2015 to March 27, 2018, aggregated into 15-minute intervals, from the GDAX exchange. We decided to use 80% of the data for training and the remaining 20% for validation.

An important step in deep learning is data preprocessing

Preprocessing may improve results or make training faster. To make all features equally important at the beginning of the training, it is a good idea to normalize input data.

Based on the 20 most recent values, our model was supposed to predict the next close price.

First, we created batches of 20 consecutive values and then used the first value in each batch to scale the data in that batch by applying the formula p(t) / p(1) - 1.
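The windowing and scaling step can be sketched with NumPy as follows. The function names are hypothetical, and two details are assumptions that the text leaves open: that the target (the next close) is scaled by the same first value as its window, and that the 80/20 split is chronological rather than shuffled.

```python
import numpy as np

def make_windows(close, window=20):
    """Return (x_scaled, y_scaled, base).

    Each row of x holds `window` consecutive closes; y is the close that
    follows. Both are scaled as p(t) / p(1) - 1, where p(1) is the first
    value of the window. `base` keeps p(1) so predictions can be unscaled.
    """
    close = np.asarray(close, dtype=float)
    n = len(close) - window
    x = np.stack([close[i:i + window] for i in range(n)])
    y = close[window:]
    base = x[:, 0]
    x_scaled = x / base[:, None] - 1.0
    y_scaled = y / base - 1.0          # assumption: target scaled like its window
    return x_scaled, y_scaled, base

def chronological_split(x, y, train_fraction=0.8):
    """Assumed split: the earliest 80% for training, the rest for validation."""
    cut = int(len(x) * train_fraction)
    return (x[:cut], y[:cut]), (x[cut:], y[cut:])
```

A scaled prediction `y_hat` is turned back into a price with `(y_hat + 1) * base`.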

Our network model consisted of two hidden LSTM layers with 128 neurons each, connected to a single neuron in the output layer. Each layer used the tanh activation function. After each LSTM layer we also added a dropout of 0.3 to prevent overfitting. The implementation was written in Python using the Keras framework with a TensorFlow backend. The model was trained for 100 epochs.
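A model along these lines might look like the following Keras sketch. The layer sizes, dropout rate and tanh activations come from the description above; the optimizer (`adam`), the number of input features (2, for price and volume) and the function name `build_model` are assumptions, since the text does not state them.

```python
from tensorflow import keras

def build_model(window=20, n_features=2):
    """Two stacked LSTM layers (128 units, tanh) with dropout, one output neuron."""
    model = keras.Sequential([
        keras.Input(shape=(window, n_features)),
        # return_sequences=True passes the full sequence on to the second LSTM
        keras.layers.LSTM(128, activation="tanh", return_sequences=True),
        keras.layers.Dropout(0.3),
        keras.layers.LSTM(128, activation="tanh"),
        keras.layers.Dropout(0.3),
        # "each layer used tanh" is read here as including the output neuron
        keras.layers.Dense(1, activation="tanh"),
    ])
    # Mean absolute error is the loss mentioned in the text; adam is assumed.
    model.compile(optimizer="adam", loss="mean_absolute_error")
    return model
```

Training would then be a call such as `model.fit(x_train, y_train, validation_data=(x_val, y_val), epochs=100)`, with inputs shaped `(samples, 20, n_features)`.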

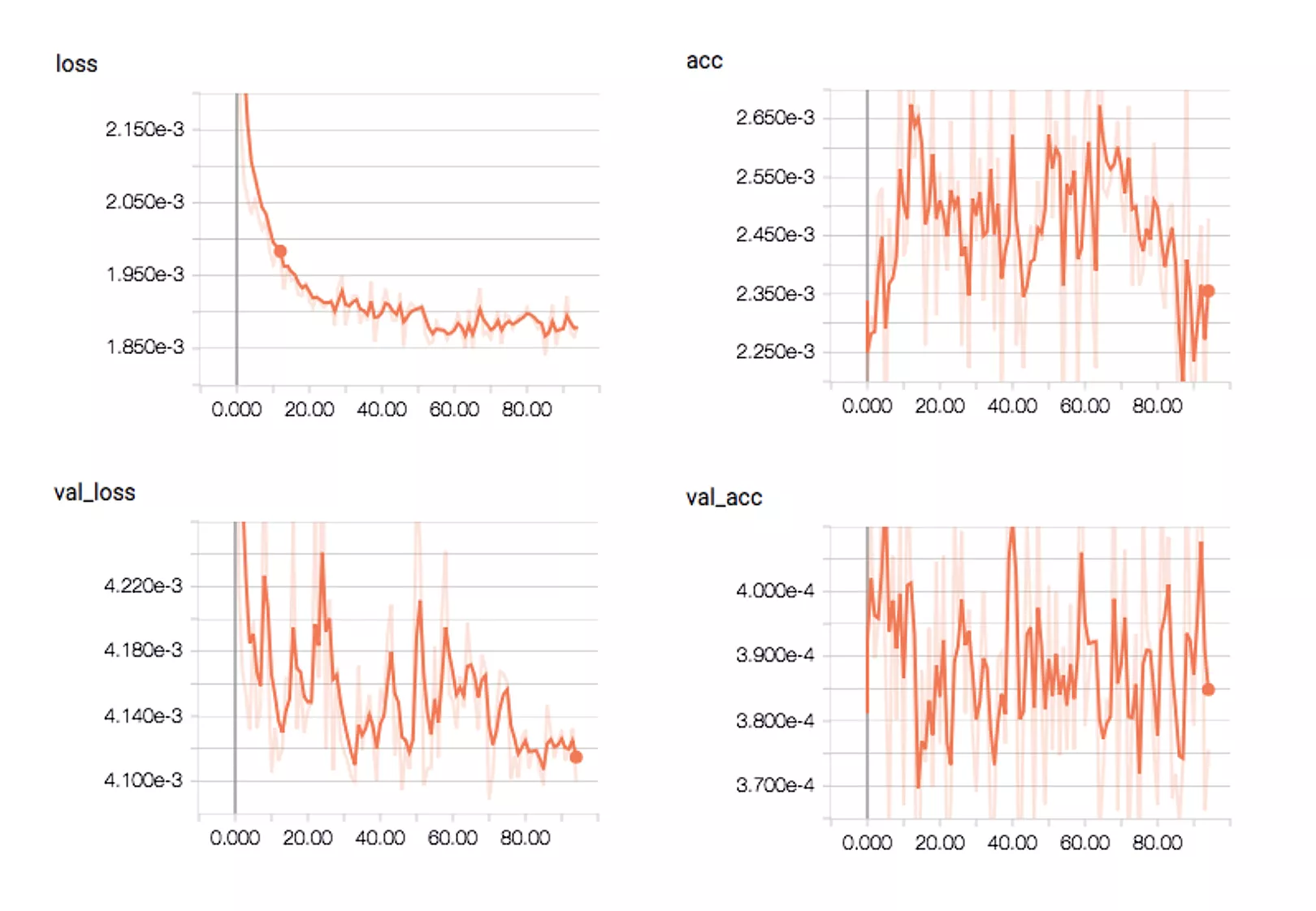

Training progress showed that the model was able to minimize the mean absolute error, but accuracy didn’t improve and had a large variance. Additionally, the model performed much better on the training set than on the validation set.

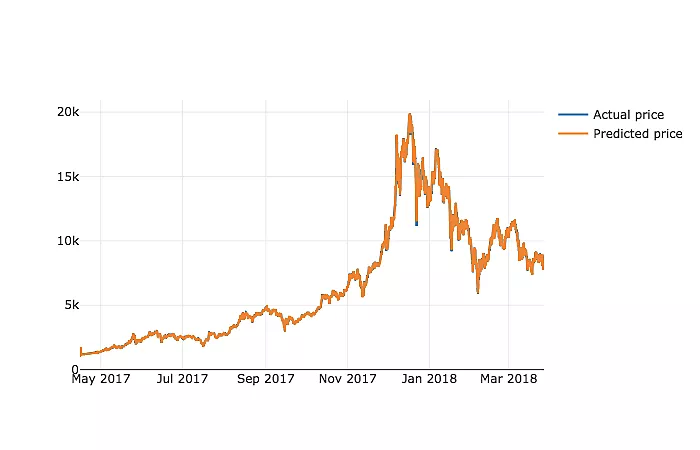

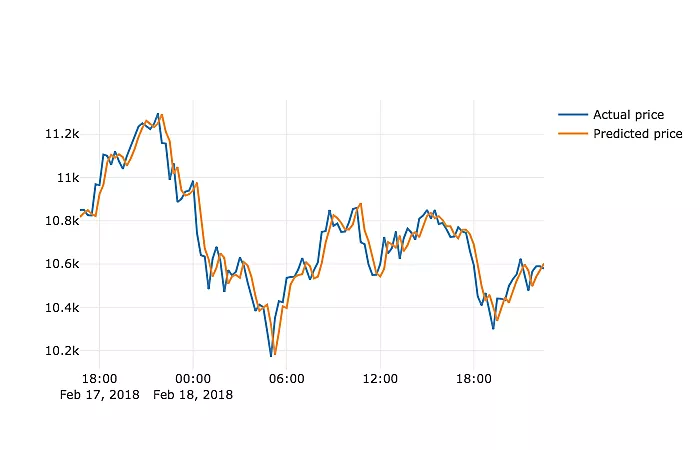

We used the validation dataset to make price predictions. Despite very well-aligned graphs of predicted and real prices, a detailed view showed that the predictions were not very useful. The model had learnt to predict a price that is almost the same as the price one time period earlier.

In other words, when you ask the model what the price will be in 15 minutes, it will take the current price and give you that as the answer.
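One way to catch this failure mode is to compare a model against a naive persistence baseline that always answers "the next price equals the current price". The sketch below uses hypothetical helper names and is not the evaluation used in the experiment. It shows why a low error alone is misleading: the baseline's error is small whenever prices move little between steps, yet it never predicts a direction.

```python
import numpy as np

def mean_absolute_error(actual, predicted):
    actual = np.asarray(actual, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    return float(np.mean(np.abs(actual - predicted)))

def persistence_forecast(prices):
    """Naive forecast for prices[1:]: repeat the previous price."""
    return np.asarray(prices, dtype=float)[:-1]

def directional_accuracy(prices, predicted_next):
    """Share of steps where the predicted move has the same sign as the real move."""
    prices = np.asarray(prices, dtype=float)
    real_move = np.sign(prices[1:] - prices[:-1])
    predicted_move = np.sign(np.asarray(predicted_next, dtype=float) - prices[:-1])
    return float(np.mean(real_move == predicted_move))
```

A model is only useful for trading if it beats the persistence forecast on both measures over the validation period.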

If you look on the Internet at articles about predicting cryptocurrency prices with LSTM networks, in a few of them you will find similar results, while most others just want to explain how to use LSTM networks. They do not use predictions for making trading decisions and that is probably the reason why detailed results analysis is not included.

We tried to train the network with different sets of hyper-parameters in the hope of getting better results, but eventually the network always learned the same strategy. The articles linked in the previous paragraph mention some reasons which suggest that historical prices alone are not enough to accurately predict the future price.

The next step to improve the model would be collecting and using more data, for example, sentiment indicators. That would require a lot of additional work, and in the end, wouldn’t guarantee good results. With some experience in deep learning, we saw another approach that would eliminate the need to make trading decisions by ourselves.

The main goals we wanted to achieve were:

- automate the whole process of trading

- maximize profit

We realized it was the kind of problem where reinforcement learning could yield more success.

Reinforcement learning for maximizing cumulative reward

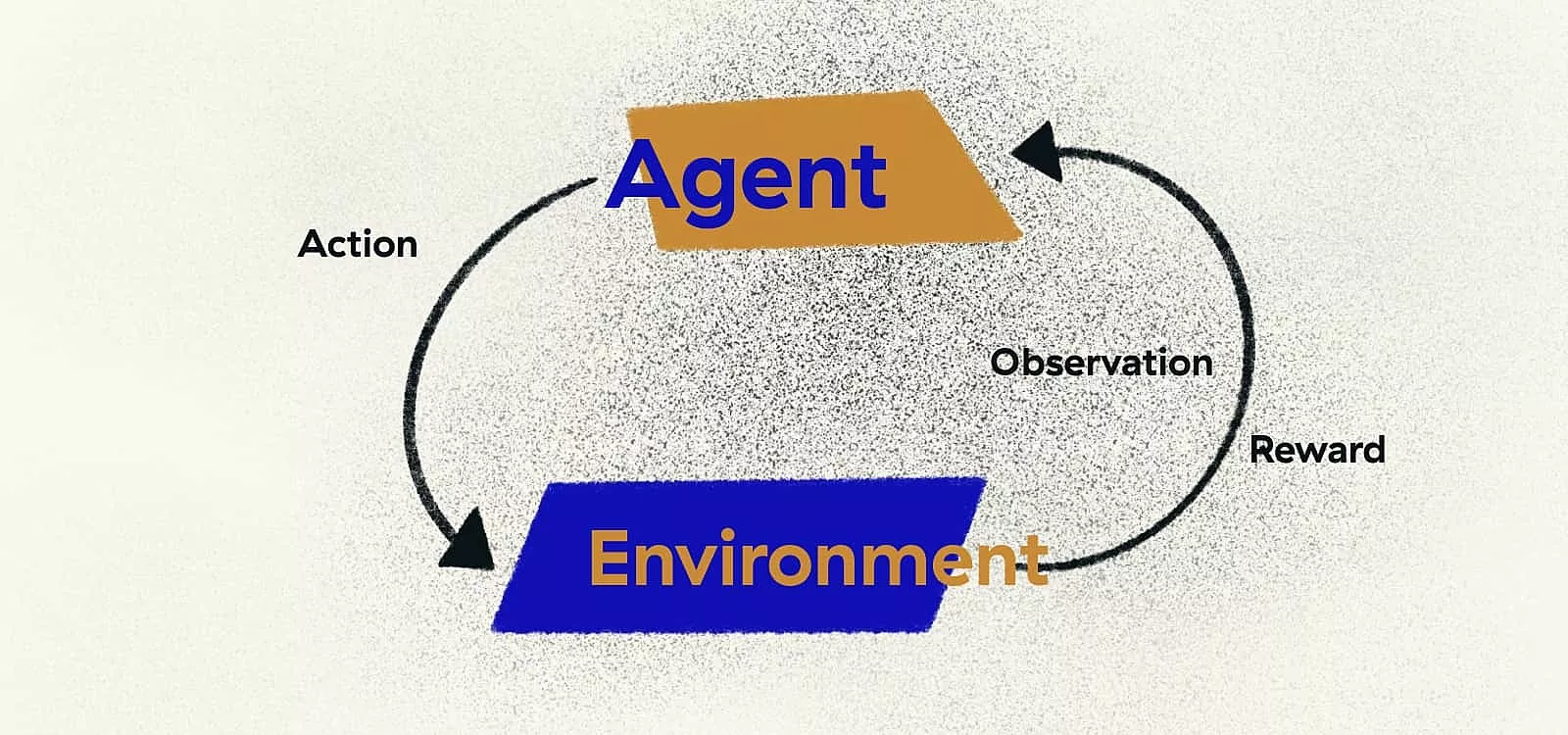

By using reinforcement learning, we could define those two requirements and let the algorithm learn how to trade profitably. Reinforcement learning is mainly used to teach agents to take actions in an environment so as to maximize cumulative reward.

The basic idea is as follows:

1. The agent observes the current state of the environment.

2. The agent decides on an action to take; with some probability, it takes a random action instead.

3. The agent receives the new state and a reward for the action taken.

4. The agent observes that experience and learns from it.

5. The agent decreases the probability of taking a random action.
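The five steps map onto a short training loop. The sketch below uses tabular Q-learning with an epsilon-greedy rule on a hypothetical one-state toy environment, chosen only because it fits in a few lines; it is not the agent used in the experiment, and all names and parameter values are assumptions.

```python
import random

class ToyEnv:
    """Hypothetical environment with one state: action 1 pays 1, action 0 pays 0."""
    n_actions = 2

    def reset(self):
        return 0

    def step(self, action):
        reward = 1.0 if action == 1 else 0.0
        return 0, reward                      # (next_state, reward)

def train(env, steps=500, epsilon=1.0, epsilon_min=0.05, decay=0.99,
          learning_rate=0.1, discount=0.9, seed=0):
    rng = random.Random(seed)
    q = {}                                    # (state, action) -> value estimate
    actions = range(env.n_actions)
    state = env.reset()                       # 1. observe the current state
    for _ in range(steps):
        if rng.random() < epsilon:            # 2. random action with probability epsilon
            action = rng.randrange(env.n_actions)
        else:                                 #    otherwise the best known action
            action = max(actions, key=lambda a: q.get((state, a), 0.0))
        next_state, reward = env.step(action)  # 3. new state and reward
        best_next = max(q.get((next_state, a), 0.0) for a in actions)
        old = q.get((state, action), 0.0)     # 4. learn from the experience
        q[(state, action)] = old + learning_rate * (reward + discount * best_next - old)
        epsilon = max(epsilon_min, epsilon * decay)  # 5. explore less over time
        state = next_state
    return q, epsilon
```

After training, `q[(0, 1)]` is larger than `q[(0, 0)]`, so the greedy choice is the rewarding action, and `epsilon` has decayed to its floor.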

At the beginning, the agent is exploring the environment and it mostly takes random actions. From experience, it concludes which actions are good in different situations in that environment.

As the agent learns more, it takes fewer random actions and starts to take actions that are going to maximize the reward. The function which decides on the action takes future rewards into account. Observing only the current state and the immediate reward doesn’t necessarily lead to the best result.

A real-life example would be hiring an inexperienced employee. Initially, the hire produces new expenses for the company. You need to teach them a specific technology and onboard them into processes and existing projects. As time progresses, they become productive and independent, and eventually a great asset for the company.
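The same idea in numbers: a discounted return adds up future rewards, weighting a reward that arrives k steps later by `discount**k`. The function name, the discount of 0.9 and the reward values below are illustrative assumptions.

```python
def discounted_return(rewards, discount=0.9):
    """Sum of rewards, where the reward k steps ahead is weighted by discount**k."""
    total = 0.0
    for reward in reversed(rewards):
        total = reward + discount * total
    return total

# An up-front cost followed by steady gains: the immediate reward is negative,
# but the discounted return over the whole sequence is positive.
onboarding = [-3.0, 1.0, 1.0, 1.0, 1.0, 1.0]
long_run_value = discounted_return(onboarding)
```

An agent that looked only at the first reward would never make the hire; one that maximizes the discounted return would.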

More curious readers can find details about different techniques and mathematical background in a great RL course by David Silver.

In our case, the environment is a cryptocurrency exchange, and the agent has to decide to buy, sell or take no action at each time step. To make the environment more compliant with the Markov property, which RL algorithms rely on to achieve good results, the input to the agent is a series of the latest prices and momentum indicators. Internally, the agent uses a deep neural network to learn which actions maximize the return, based on observations from the environment.
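A stripped-down version of such an environment could look like the sketch below. Everything in it is an assumption made for illustration: the class and constant names, the all-in/all-out position sizing, the 0.25% fee, the reward (relative change in portfolio value) and the observation (recent prices relative to the oldest one, plus a flag for whether coins are held, with momentum indicators left out).

```python
HOLD, BUY, SELL = 0, 1, 2

class TradingEnv:
    """Hypothetical exchange simulator over a fixed list of prices."""

    def __init__(self, prices, fee=0.0025, lookback=4):
        self.prices = list(prices)
        self.fee = fee
        self.lookback = lookback
        self.reset()

    def _value(self):
        return self.cash + self.coins * self.prices[self.t]

    def _observe(self):
        window = self.prices[self.t - self.lookback + 1 : self.t + 1]
        recent = [p / window[0] - 1.0 for p in window]
        # Including the current position makes the state closer to Markov.
        return recent + [1.0 if self.coins > 0 else 0.0]

    def reset(self):
        self.t = self.lookback - 1
        self.cash, self.coins = 1.0, 0.0
        return self._observe()

    def step(self, action):
        value_before = self._value()
        price = self.prices[self.t]
        if action == BUY and self.cash > 0:
            self.coins, self.cash = self.cash * (1 - self.fee) / price, 0.0
        elif action == SELL and self.coins > 0:
            self.cash, self.coins = self.coins * price * (1 - self.fee), 0.0
        self.t += 1
        reward = self._value() / value_before - 1.0   # fee and price move combined
        done = self.t == len(self.prices) - 1
        return self._observe(), reward, done
```

Staying in cash always yields a reward of zero, while every trade starts with a small negative contribution from the fee.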

The policy gradient agent was trained for 5 million episodes. Using a model of the environment with fees and short time steps, the agent often learnt a strategy in which it doesn’t trade. If you look at the price change every 15 minutes, you will see that it is rarely higher than 0.3%. A strategy of not trading makes the most sense in that case because you are not spending money on fees.

Depending on the interval which was used for training, the agent learnt either a buy-and-hold strategy or not to buy at all.

That leads to the conclusion that such an environment is not very convenient for day trading and that long-term investment might be a better option. Enlightened by those results, we trained the agent with a 60-minute time step. The results were much better.

Since we had used the training set to evaluate the agent, we wanted to see how it would perform in real situations. We implemented a “paper trading” system and let the agent trade with hypothetical money. Unfortunately for all cryptocurrency investors, in the last 2 months there has been a general downtrend.
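A quick calculation shows why not trading wins on short time steps. Assuming each trade costs a fraction `fee` of the traded amount (the 0.25% used below is a hypothetical value, not a figure from the experiment), a buy followed by a sell only breaks even if the price rises enough to cover both fees.

```python
def break_even_move(fee):
    """Relative price increase needed for a buy-then-sell round trip to break even.

    Buying leaves (1 - fee) of the money in coins; selling leaves (1 - fee)
    of the proceeds, so the price must rise by a factor of 1 / (1 - fee)**2.
    """
    return 1.0 / (1.0 - fee) ** 2 - 1.0

required = break_even_move(0.0025)   # roughly 0.005, i.e. about 0.5%
```

With that fee, the price has to rise by about 0.5% just to recover the costs, which is more than the typical 15-minute change of under 0.3%.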

The agent did avoid some losses, but when you are playing with real money, you probably want to avoid investing in something like that.

Reinforcement learning performed better than price prediction

Machine learning is a great tool to automate complex tasks which only humans could do in the past. Its algorithms can capture patterns in the data which are sometimes not visible to humans.

While the price prediction algorithm did not produce successful results, reinforcement learning performed much better. It is often very hard or impossible to explain the outputs of neural networks, which is why you need to perform a lot of testing and ensure that an unexpected result from an ML algorithm will not cause you serious problems. In some other cases, such results will do you no harm.

In this particular case when you are investing your money, you probably want to have strong rules which are going to prevent big losses.

We walked away with knowledge

There were other options to improve the performance of the system. One such option would be to take into account the already mentioned sentiment indicators. With machine learning it would be possible to determine sentiment about a cryptocurrency from news and tweets and let the algorithm use that data as well to determine which actions to take.

As the cryptocurrency market was not showing promising returns at that point, we wrapped up our experiment. The takeaway: learning about different approaches to machine learning was a great investment.