If you google “AI in software testing”, you will come across a plethora of articles that try to guess what the future will bring, but none with a clear idea of what AI testing really is.

I want to make something clear right from the get-go: the question posed in the title of this blog post does not have a definitive answer yet. Still, it’s a hot topic that’s generating a lot of buzz in the blogosphere and at software testing conferences around the world.

Some believe that AI will magically change the way we approach testing and quality assurance.

The folks at Test.ai certainly think it will. While doing research, I came across an interesting statement on their webpage that lists all the staff working on the project. One of the team members was described as “Hard working, never going to give up and will never break down!”

His name: Bot ac:78:b7:01:90:f1

Jason Arbon, the CEO of Test.ai, has an interesting prediction regarding AI in software testing. He thinks that by 2027, humans will no longer be an essential ingredient of the testing formula.

Is AI our new BFF?

Before we kick off the discussion, we must come to terms with the basic character flaws that the current efforts in AI-driven testing share.

The first is a lack of insight into the source of truth, which usually lives in sparse documentation, test cases, or the minds of product owners, stakeholders, developers, and end-users. The second, and quite a serious deficiency, is the inability to empathize with end-users. Empathy is what lets human testers respond emotionally to confusing flows, slow loading times, awkward layouts, etc.

In any case, AI needs our guidance to provide novel insights. Is this the future that we are nearing: AI that replaces the labor of manual testing, only to become our clingy BFF that constantly expects guidance and counseling throughout the course of our relationship?

And even if this does become our reality, will it put us in the position of almighty overlords who constantly monitor, fine-tune, and evolve the “artificial brain” of our new BFF, teaching it what is correct and incorrect behaviour, what is an exciting feature and what is just plain strange?

AI-powered tools of today

As of yet, there are no magical tools whose AI is powerful enough to learn by itself and evolve over time without human intervention. However, there are some tools out there that harness AI to facilitate and speed up testing, and to help out with task execution.

Here are some examples:

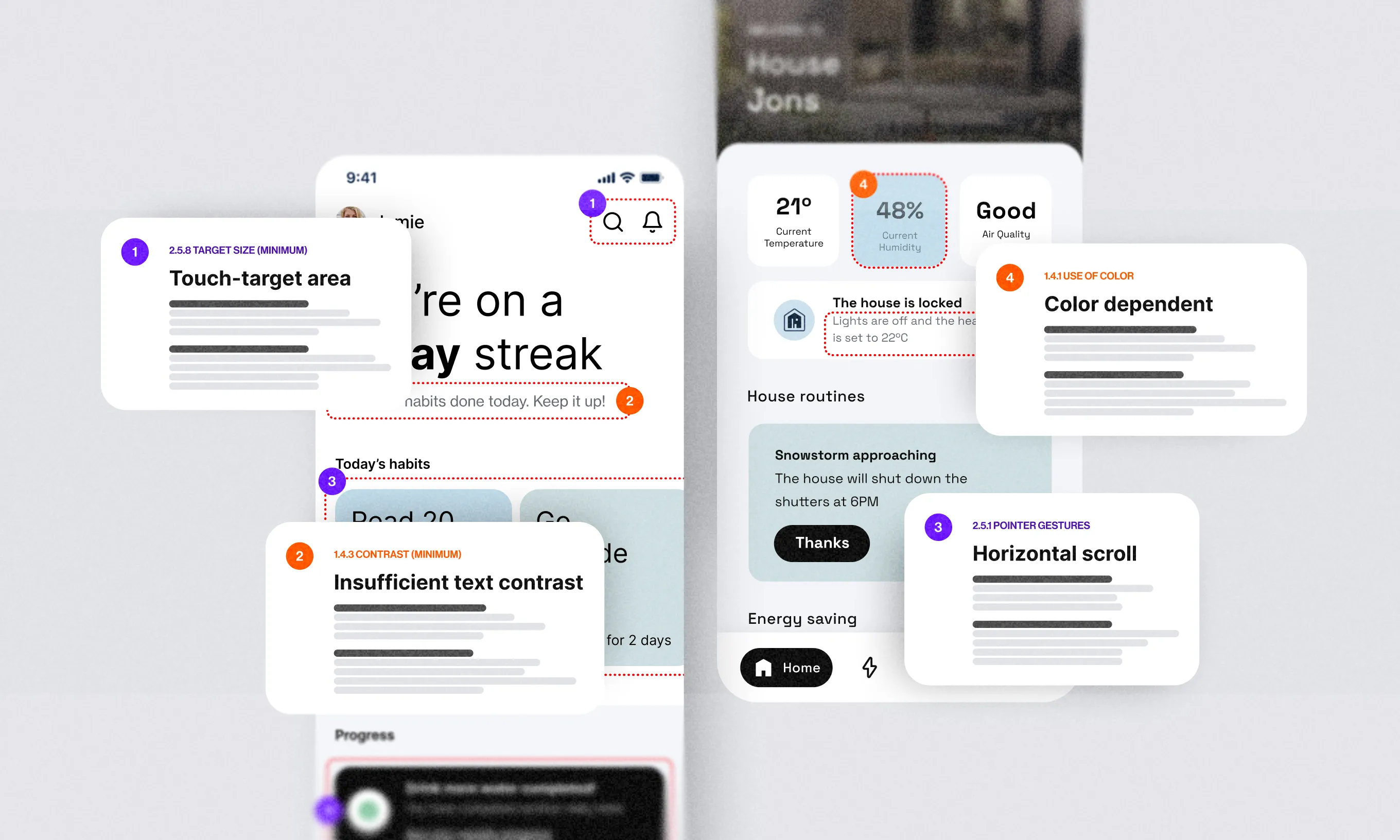

- Applitools, which enhances tests with AI-powered visual comparison of screenshots against set baselines.

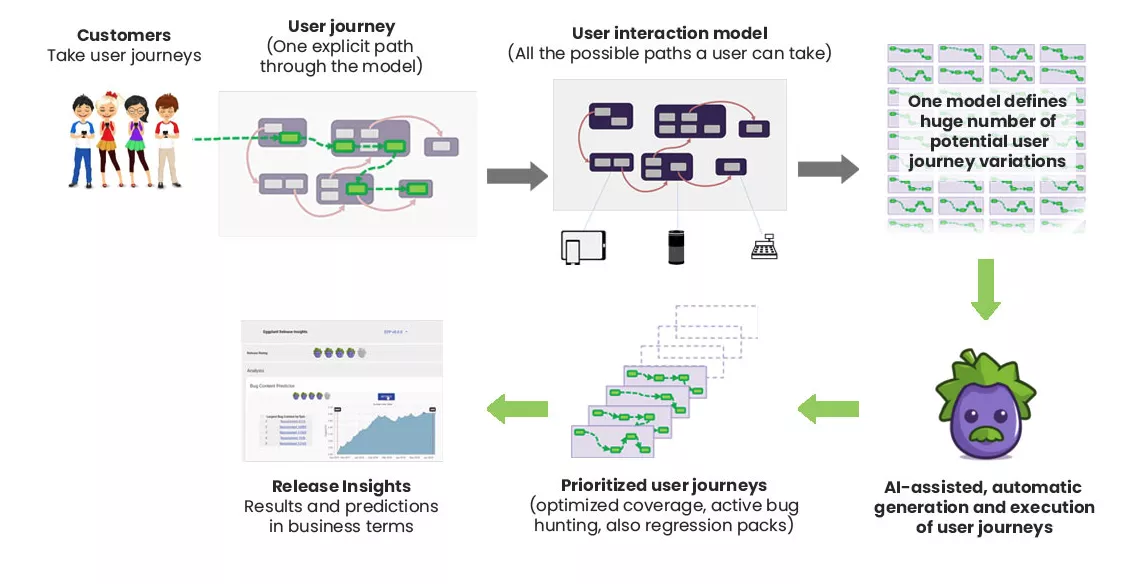

- Eggplant, an AI app with intelligent algorithms for navigating software, predicting defects, and solving issues with advanced data correlation.

- Retest, a UI testing app. Although they mention AI testing in their GUI app solution, there is no detailed breakdown explaining how it works other than “AI-based test automation” on the product info page.

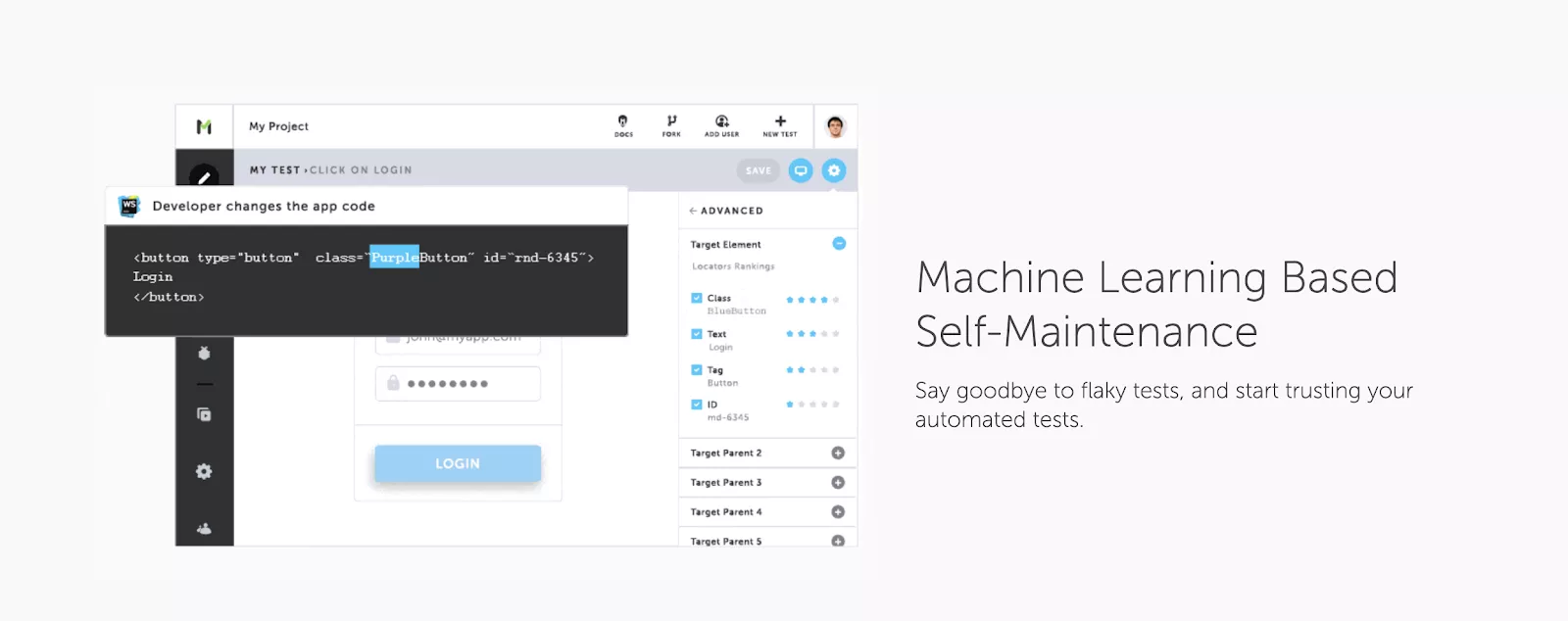

- Testim, a machine learning app that records your actions in an app and turns them into tests. It uses AI for “speeding up the authoring, execution and maintenance of automated tests.”

- Functionize, an asynchronous and scalable solution where AI helps build a workflow from test cases that you have manually clicked through, written, or imported.
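To make the idea of comparing screenshots against a baseline more concrete, here is a minimal sketch in Python. It is not how Applitools or any other tool on this list works internally; it is plain pixel thresholding with no AI involved, and the `Image` type, the `diff_ratio` and `matches_baseline` functions, and the tolerance values are all assumptions made up for illustration. The commercial tools claim to go further by ignoring differences a human would not notice.

```python
from typing import List

# Hypothetical stand-in for a screenshot: rows of grayscale pixels (0-255).
Image = List[List[int]]


def diff_ratio(baseline: Image, candidate: Image, tolerance: int = 10) -> float:
    """Return the fraction of pixels that differ by more than `tolerance`."""
    if len(baseline) != len(candidate) or any(
        len(b_row) != len(c_row) for b_row, c_row in zip(baseline, candidate)
    ):
        raise ValueError("screenshots must have the same dimensions")

    total = sum(len(row) for row in baseline)
    changed = sum(
        1
        for b_row, c_row in zip(baseline, candidate)
        for b, c in zip(b_row, c_row)
        if abs(b - c) > tolerance
    )
    return changed / total if total else 0.0


def matches_baseline(baseline: Image, candidate: Image, max_ratio: float = 0.01) -> bool:
    """Accept the candidate if at most `max_ratio` of its pixels changed."""
    return diff_ratio(baseline, candidate) <= max_ratio


baseline = [[255, 255, 255, 255], [255, 0, 0, 255]]
noisy = [[255, 250, 255, 255], [255, 3, 0, 255]]        # small rendering noise
broken = [[255, 255, 255, 255], [255, 255, 255, 255]]   # dark element vanished

print(matches_baseline(baseline, noisy))   # noise stays within the tolerance
print(matches_baseline(baseline, broken))  # a quarter of the pixels changed
```

A fixed threshold like this is exactly what tends to produce false alarms on anti-aliasing or font rendering changes, which is the gap the AI-powered comparison is meant to close.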

Some of the most noticeable benefits that AI in testing would deliver are improved accuracy, breaking free from the limitations of manual testing, and helping developers and testers be more proficient.

Additionally, it would increase the overall depth and scope of testing on a project, as well as reduce the cost and time required to launch.

You can also check out Jeff Nyman’s take on the topic. He has some interesting insights and opinions regarding AI in software testing.

ML/AI vs. “stupid” scripts

Since we are talking about artificial intelligence, we should touch upon machine learning and how it differs from AI in the broader sense. AI is the umbrella goal of getting machines to mimic human reasoning, whereas ML is a subset of it that learns patterns from raw data instead of following hand-written rules. It is narrower and more goal-oriented than AI as a whole.

If you gave an ML model access to a dataset with lots of songs you listen to or TV shows you watch, it would use that data to build a recommendation system based on your consumption habits. You may have already guessed that’s something Spotify and Netflix do when they try to create an experience “specifically catered to your taste”.
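As a rough illustration of learning recommendations from consumption habits, here is a toy sketch in Python. It is a simple overlap-based approach, not what Spotify or Netflix actually run, and the user names, song names, and the `recommend` function are all hypothetical.

```python
from collections import Counter
from typing import Dict, List, Set

# Hypothetical listening histories; every name here is invented for illustration.
histories: Dict[str, Set[str]] = {
    "ana": {"song_a", "song_b", "song_c"},
    "ben": {"song_a", "song_b", "song_d"},
    "cara": {"song_b", "song_d"},
    "dan": {"song_e"},
}


def recommend(user: str, histories: Dict[str, Set[str]], top_n: int = 3) -> List[str]:
    """Suggest items from users whose history overlaps with `user`'s."""
    seen = histories[user]
    scores: Counter = Counter()
    for other, items in histories.items():
        if other == user:
            continue
        overlap = len(seen & items)  # shared items act as a similarity weight
        if overlap == 0:
            continue
        for item in items - seen:
            scores[item] += overlap
    # Sort by score (descending), then by name so ties are deterministic.
    ranked = sorted(scores.items(), key=lambda pair: (-pair[1], pair[0]))
    return [item for item, _ in ranked[:top_n]]


print(recommend("ana", histories))
print(recommend("cara", histories))
```

Nobody told the function which songs go together; the ranking falls out of the data. With no overlapping listeners (as for `dan`), it has nothing to say, which hints at why these systems need a lot of data to be useful.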

A myth-come-true?

Traditional automated testing is nothing like that. Tests implemented using Selenium and similar tools are, by definition, stupid.

They will happily miss whatever is not explicitly covered in the test, they have to be rigorously maintained, and they generally tend to be quite fragile. That is why human testers and coders are still invaluable assets.
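Both weaknesses can be shown with a small sketch in Python. To keep it runnable without a browser, it does not use Selenium; the page is a hypothetical dictionary standing in for what a browser would render, and `render_login_page` and `scripted_login_test` are made-up names.

```python
# Hypothetical page model: a dict standing in for what a browser would render.
def render_login_page(broken_layout: bool = False) -> dict:
    return {
        "title": "Sign in",
        "submit_button": {"text": "Log in", "visible": True},
        # Not referenced by the test below, so changes here go unnoticed.
        "logo": {"visible": not broken_layout},
        "password_field": {"overlaps_button": broken_layout},
    }


def scripted_login_test(page: dict) -> bool:
    """Checks only what the script author thought to assert."""
    return (
        page["title"] == "Sign in"
        and page["submit_button"]["visible"]
        and page["submit_button"]["text"] == "Log in"
    )


# Blindness: the broken layout gets the same verdict as the healthy page.
print(scripted_login_test(render_login_page()))
print(scripted_login_test(render_login_page(broken_layout=True)))

# Fragility: a harmless copy change on the button fails the test.
renamed = render_login_page()
renamed["submit_button"]["text"] = "Sign in"
print(scripted_login_test(renamed))
```

A human tester would spot the missing logo and the overlapping field in seconds, and would shrug at the renamed button. The script gets both calls wrong.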

Therefore, the main question remains: will these tools ever evolve to the point of becoming so imaginative and empathetic as to make human intervention obsolete? Will this myth become a reality?

Going back to square one, I guess we’ll just have to wait and see.
