Snapshot Testing has become a commonly adopted technique for testing user interfaces. Its popularity stems from how well it addresses the unique challenges of UI testing and from how simple it is to set up.

Despite its success in UI testing, snapshot testing hasn’t gained much traction in other areas of software development. This lack of adoption is unfortunate, as many challenges in software development mirror those encountered in UI testing: hard-to-define outputs, complex interactions, and the need for reliable, repeatable tests.

This is especially relevant in the age of AI, where a feedback loop is crucial for autonomous agentic development.

Snapshot testing can, however, be extended beyond UI and applied effectively to audio development. Enter AudioSnapshotTesting, a library developed in collaboration with AudioKit and designed to simplify and streamline the testing of audio processing workflows.

There is no greater impediment to the advancement of knowledge than the ambiguity of words.

Thomas Reid

The idea of snapshot testing was popularised in the iOS community by Facebook’s FBSnapshotTestCase, later renamed and maintained by Uber as iOSSnapshotTestCase. The API of those libraries was constrained to UI testing. Later, the “delightful” swift-snapshot-testing library gained popularity. Although swift-snapshot-testing took a significant step towards generalising the idea of snapshot testing – by providing strategies to “Snapshot Anything” – in practice, companies mostly use it for UI testing.

Snapshot Testing, Golden Master Testing, Characterization Testing, Approval Testing and Reference Testing all describe the same fundamental idea – verifying that software behaviour remains consistent over time. Unfortunately, terminological fragmentation creates artificial barriers, leading to missed opportunities.

To explore Snapshot Testing outside of the UI domain, we will work on a simple metronome app.

Testing Metronome

Building a metronome seems straightforward: generate periodic audio clicks at a specified tempo.
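
To make "periodic clicks at a specified tempo" concrete, here is a minimal sketch of a click-track renderer that works on raw samples. The function `renderClickTrack` and its parameters are hypothetical and are not part of the metronome app or of AudioSnapshotTesting; the click shape (a short, linearly decaying impulse) is also an assumption chosen for brevity.

```swift
import Foundation

/// Hypothetical helper: renders a mono click track as raw samples in -1...1.
func renderClickTrack(
    bpm: Double,
    sampleRate: Double,
    beats: Int,
    clickLength: Int = 64
) -> [Float] {
    let samplesPerBeat = sampleRate * 60.0 / bpm
    let totalSamples = Int((samplesPerBeat * Double(beats)).rounded())
    var samples = [Float](repeating: 0, count: totalSamples)

    for beat in 0..<beats {
        // Derive each onset from the beat index rather than adding a rounded
        // interval repeatedly, so rounding errors do not accumulate.
        let onset = Int((Double(beat) * samplesPerBeat).rounded())
        for offset in 0..<clickLength where onset + offset < totalSamples {
            // Short, linearly decaying impulse standing in for a click sound.
            samples[onset + offset] = 1.0 - Float(offset) / Float(clickLength)
        }
    }
    return samples
}

let track = renderClickTrack(bpm: 120, sampleRate: 44_100, beats: 4)
```

At 120 BPM and 44.1 kHz each beat spans 22,050 samples, so the four onsets in `track` fall at multiples of that value.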

However, when we start implementing one, questions arise quickly:

- How do we verify that the timing is accurate and doesn’t drift over time?

- How do we ensure our audio processing doesn’t introduce artifacts?

- How do we catch regressions when refactoring?

The traditional approach is tedious: build the app, run it and listen carefully. Maybe export audio and inspect it in a third-party tool. Repeat for every change.

Snapshot testing offers a different workflow. Here’s a simple test for our metronome’s audio output:

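    import AudioSnapshotTesting
    import Testing

    @Test(
        .audioSnapshot(
            record: true, // record a new reference; set to false to verify against it
            strategy: .waveform(width: 3000, height: 800)
        )
    )
    @MainActor
    func testMetronomeOutput() async throws {
        // Render the metronome's output into an audio buffer.
        let metronome = Metronome(bpm: 120)
        let buffer = try metronome.render()

        await assertAudioSnapshot(
            of: buffer,
            named: "metronome-120bpm"
        )
    }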

Running this test with record: true saves the audio buffer to disk as a lossless ALAC-encoded file. The ALAC format produces significantly smaller files than WAV while maintaining audio fidelity. Additionally, while in recording mode, the library generates a waveform visualization that allows us to iterate on our metronome implementation and see changes in the generated image.

Once we have a reference we are confident in, we switch to record: false.

Now the test compares the current output against that reference audio file, failing if anything changes.
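
The record-then-verify cycle can be sketched in a few lines. This is an illustration of the general idea only, not the library's implementation: `verifySnapshot` and `SnapshotResult` are hypothetical names, and the reference is stored as raw `Float` bytes rather than as an ALAC file to keep the example free of audio frameworks.

```swift
import Foundation

enum SnapshotResult: Equatable {
    case recorded, matched, mismatched, missingReference
}

/// Hypothetical helper: records samples as a reference file, or compares
/// the current samples against a previously recorded reference.
func verifySnapshot(_ samples: [Float], at url: URL, record: Bool) throws -> SnapshotResult {
    // Serialize the samples as raw bytes (assumption: no encoding step).
    let current = samples.withUnsafeBufferPointer { Data(buffer: $0) }

    if record {
        try current.write(to: url)
        return .recorded
    }
    guard FileManager.default.fileExists(atPath: url.path) else {
        return .missingReference
    }
    let reference = try Data(contentsOf: url)
    return reference == current ? .matched : .mismatched
}
```

Recording once and then verifying on every later run is what turns a one-off listening session into a repeatable check.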

This workflow transforms audio development. Instead of rebuilding and running our app dozens of times, we run our test suite. Each test runs quickly and provides the iterative feedback we (or an AI agent) need to implement our audio features.

The real power emerges when refactoring. Want to optimize your audio rendering or change your DSP algorithm? The expected output is now covered by tests. If the output remains identical, we haven’t broken anything. If it changes, we can immediately see what changed and decide whether it’s intended or a bug.

A picture truly is worth a thousand tests

AudioSnapshotTesting Library

AudioSnapshotTesting is designed to work seamlessly with the Swift Testing framework, providing specialized support for snapshotting AVAudioPCMBuffer instances with various visualization strategies.

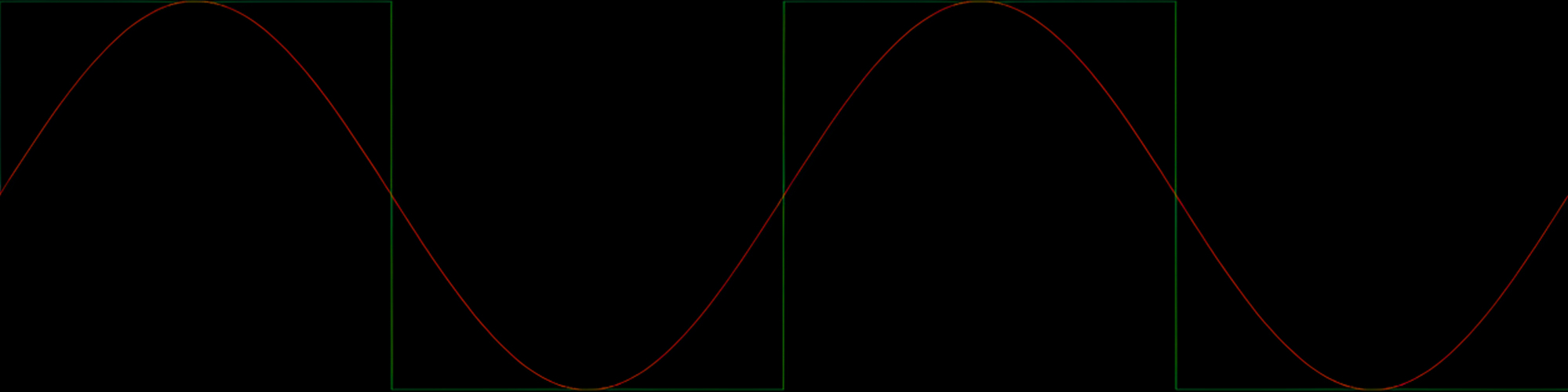

Waveform: Time-Domain Visualization

The waveform strategy provides the familiar amplitude-over-time view. It’s perfect for spotting timing issues, clipping, unexpected silence, or amplitude problems:

    @Test(
        .audioSnapshot(
            strategy: .waveform(width: 3000, height: 800)
        )
    )
    func testSineWave() async {
        // `buffer` is an AVAudioPCMBuffer prepared elsewhere in the test.
        await assertAudioSnapshot(of: buffer, named: "sine")
    }

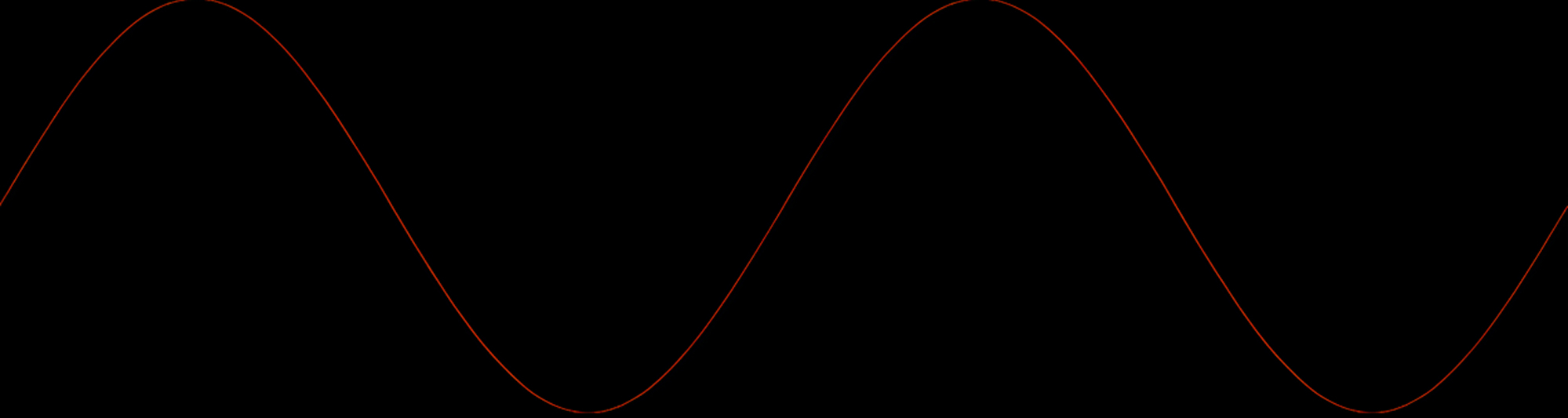
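
For intuition about what a waveform image encodes, a common approach is to reduce the samples to one minimum/maximum pair per pixel column and draw a vertical line between them. The sketch below shows that reduction only; `waveformColumns` is a hypothetical name, and whether the library renders its images this way is an assumption.

```swift
/// Hypothetical helper: reduces samples to one (min, max) pair per pixel column.
func waveformColumns(_ samples: [Float], width: Int) -> [(min: Float, max: Float)] {
    guard width > 0, !samples.isEmpty else { return [] }
    return (0..<width).map { column -> (min: Float, max: Float) in
        // Map the column to a half-open range of sample indices.
        let start = column * samples.count / width
        // Guarantee at least one sample per column, even when width > samples.count.
        let end = max(start + 1, (column + 1) * samples.count / width)
        let slice = samples[start..<min(end, samples.count)]
        return (min: slice.min() ?? 0, max: slice.max() ?? 0)
    }
}

let columns = waveformColumns([0, 1, -1, 0.5], width: 2)
```

With this reduction, clipping shows up as columns pinned at ±1 and unexpected silence as columns where both values are 0.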

Want to compare two audio buffers visually? The waveform strategy automatically overlays multiple buffers:

    @Test(
        .audioSnapshot(strategy: .waveform(width: 3000, height: 800))
    )
    func testComparison() async {
        // `sineBuffer` and `squareBuffer` are AVAudioPCMBuffers prepared elsewhere in the test.
        await assertAudioSnapshot(
            of: (sineBuffer, squareBuffer),
            named: "sine-over-square"
        )
    }

This renders the first buffer in red and the second in green, making differences immediately obvious.

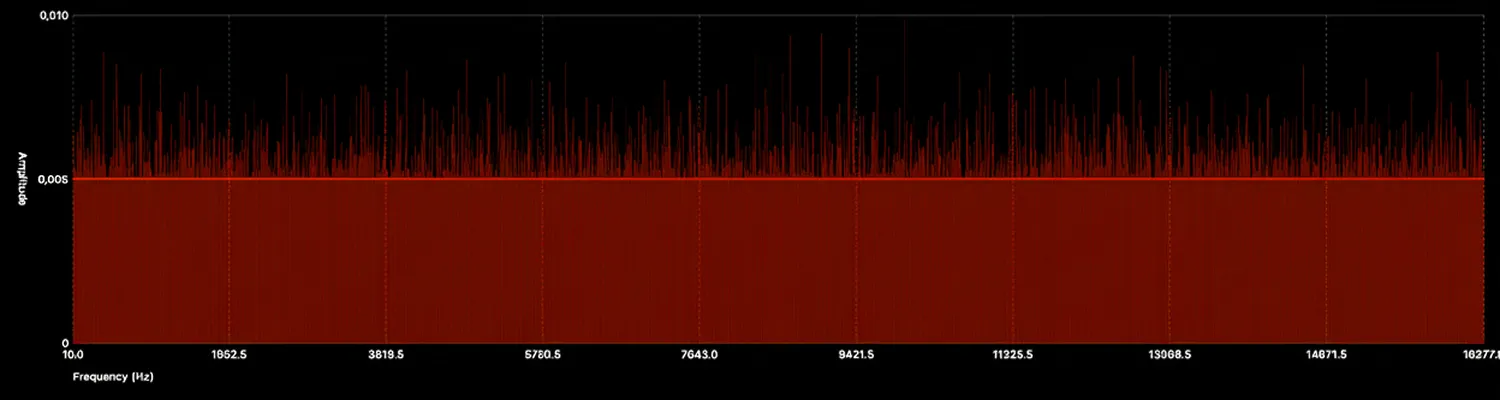
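
The comparison test above does not show where its two input signals come from. As a rough sketch, test signals like these can be generated as plain sample arrays; the helpers `sineWave` and `squareWave` are hypothetical, and copying the samples into an AVAudioPCMBuffer is left out.

```swift
import Foundation

/// Hypothetical helper: a sine wave with amplitude 1.
func sineWave(frequency: Double, sampleRate: Double, count: Int) -> [Float] {
    (0..<count).map { index in
        Float(sin(2.0 * Double.pi * frequency * Double(index) / sampleRate))
    }
}

/// Hypothetical helper: a square wave derived from the sign of the sine.
func squareWave(frequency: Double, sampleRate: Double, count: Int) -> [Float] {
    sineWave(frequency: frequency, sampleRate: sampleRate, count: count)
        .map { $0 >= 0 ? 1 : -1 }
}

let sineSamples = sineWave(frequency: 440, sampleRate: 44_100, count: 4_410)
let squareSamples = squareWave(frequency: 440, sampleRate: 44_100, count: 4_410)
```

Deterministic generators like these keep the reference files stable, since the same inputs always produce the same samples.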

Spectrum: Frequency-Domain Visualization

While waveforms show what happens over time, spectrum analysis reveals frequency content. This is invaluable when testing filters, equalizers, or any DSP that operates in the frequency domain:

    @Test(
        .audioSnapshot(
            strategy: .spectrum(width: 1500, height: 400)
        )
    )
    func testWhiteNoise() async {
        // `buffer` is an AVAudioPCMBuffer prepared elsewhere in the test.
        await assertAudioSnapshot(of: buffer, named: "white-noise")
    }
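
To illustrate what "frequency content" means here, the following sketch computes a magnitude spectrum with a naive discrete Fourier transform. It is a stand-in for clarity only: `magnitudeSpectrum` is a hypothetical name, the O(n²) loop is far slower than an FFT, and nothing here reflects how the library computes its spectrum.

```swift
import Foundation

/// Hypothetical helper: magnitudes for bins 0...n/2 via a naive DFT.
func magnitudeSpectrum(_ samples: [Float]) -> [Double] {
    let n = samples.count
    guard n > 0 else { return [] }
    return (0..<(n / 2 + 1)).map { bin -> Double in
        var real = 0.0
        var imaginary = 0.0
        for (index, sample) in samples.enumerated() {
            let phase = -2.0 * Double.pi * Double(bin) * Double(index) / Double(n)
            real += Double(sample) * cos(phase)
            imaginary += Double(sample) * sin(phase)
        }
        // Normalize by the frame length so magnitudes do not grow with n.
        return (real * real + imaginary * imaginary).squareRoot() / Double(n)
    }
}

// A 64-sample frame containing exactly 4 cycles of a sine wave.
let frame = (0..<64).map { Float(sin(2.0 * Double.pi * 4.0 * Double($0) / 64.0)) }
let spectrum = magnitudeSpectrum(frame)
```

For this input the energy concentrates in bin 4, which is the kind of peak a spectrum snapshot makes visible when a filter or equalizer shifts or attenuates it.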

Spectrogram: Time-Frequency Representation

The spectrogram strategy combines time and frequency analysis, showing how spectral content evolves:

    @Test(
        .audioSnapshot(
            strategy: .spectrogram(
                hopSize: 4096,
                frequencyCount: 2048,
                amplitudeScale: .logarithmic(range: 120)
            )
        )
    )
    func testChirp() async {
        // `buffer` is an AVAudioPCMBuffer prepared elsewhere in the test.
        await assertAudioSnapshot(of: buffer, named: "chirp")
    }

Spectrograms are particularly useful for analyzing complex signals, transient events, or any audio where frequency content changes over time.
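
Two ideas sit behind a spectrogram's parameters: the signal is cut into frames that start one hop apart, and magnitudes are usually mapped to a decibel scale before being drawn. The sketch below shows both under stated assumptions: `frameOffsets` and `normalizedLevel` are hypothetical helpers, and reading `.logarithmic(range: 120)` as a 120 dB dynamic range is my interpretation, not something the library documentation confirms here.

```swift
import Foundation

/// Hypothetical helper: start indices of analysis frames spaced one hop apart.
func frameOffsets(sampleCount: Int, frameSize: Int, hopSize: Int) -> [Int] {
    guard frameSize > 0, hopSize > 0, sampleCount >= frameSize else { return [] }
    return Array(stride(from: 0, through: sampleCount - frameSize, by: hopSize))
}

/// Hypothetical helper: maps a linear magnitude to 0...1 on a decibel scale,
/// where 0 dB maps to 1 and -rangeDB (or quieter) maps to 0.
func normalizedLevel(magnitude: Double, rangeDB: Double) -> Double {
    guard magnitude > 0 else { return 0 }
    let decibels = 20.0 * log10(magnitude)
    return min(1.0, max(0.0, 1.0 + decibels / rangeDB))
}

let offsets = frameOffsets(sampleCount: 10, frameSize: 4, hopSize: 2)
let midLevel = normalizedLevel(magnitude: 0.001, rangeDB: 120) // -60 dB
```

A smaller hop gives finer time resolution at the cost of more frames, while a wider decibel range keeps quiet components visible next to loud ones.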

Streamlined Workflow

For an even smoother development experience, the library offers the autoOpen parameter. When enabled during recording, it automatically opens visualizations in Preview (on macOS), eliminating the need to manually locate and open files:

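    @Test(
        .audioSnapshot(
            record: true,
            strategy: .waveform(width: 3000, height: 800),
            autoOpen: true // open the generated visualization after recording
        )
    )
    func testWithAutoOpen() async {
        // `buffer` is an AVAudioPCMBuffer prepared elsewhere in the test.
        await assertAudioSnapshot(of: buffer, named: "test")
    }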

This is particularly useful when iterating on DSP algorithms—run the test, and the waveform appears instantly for visual verification.

Why a Standalone Library?

While swift-snapshot-testing is an excellent library, we wanted a specialised approach. Audio is about samples—raw numeric data representing sound waves. In AudioSnapshotTesting, sample-by-sample comparison is the source of truth. Visualizations are secondary, generated only on failure or during recording as debugging aids. The audio files are always preserved, allowing inspection in any audio editor.
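
A sample-by-sample comparison can be sketched as follows. This is an illustration of the principle rather than the library's code: `ComparisonResult` and `compareSamples` are hypothetical names, and the exact-equality check (no tolerance) is an assumption.

```swift
enum ComparisonResult: Equatable {
    case identical
    case lengthMismatch(expected: Int, actual: Int)
    case sampleMismatch(index: Int, expected: Float, actual: Float)
}

/// Hypothetical helper: compares two sample arrays and reports the first difference.
func compareSamples(expected: [Float], actual: [Float]) -> ComparisonResult {
    guard expected.count == actual.count else {
        return .lengthMismatch(expected: expected.count, actual: actual.count)
    }
    // Walk both arrays in lockstep and stop at the first differing sample.
    for (index, pair) in zip(expected, actual).enumerated() where pair.0 != pair.1 {
        return .sampleMismatch(index: index, expected: pair.0, actual: pair.1)
    }
    return .identical
}
```

Reporting the index of the first mismatch gives a concrete starting point, which a visualization can then put into context.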

Try it out

In this article, we’ve shown how snapshot testing serves dual purposes: providing rapid feedback during development and acting as a comprehensive regression suite. The techniques we’ve explored—waveform visualization, spectrum analysis, and spectrogram generation—aren’t new, but integrating them into your test suite transforms them from manual verification tools into automated quality gates.

With audio files always preserved for detailed inspection, configurable bit depth for balancing file size and precision, and workflow enhancements like auto-opening, AudioSnapshotTesting makes audio testing both powerful and practical.

As long-term AudioKit users, we’ve benefited immensely from the community’s contributions to audio development on Apple platforms. We’re thrilled to give back with this tool and hope it helps others build more reliable audio software.

The library is open source and available on GitHub, and we welcome contributions from the community: